Compare commits

No commits in common. "7fbbc5d3d39c6897eb268b0f4559f30f95e8bf29" and "45eefaa5871fe8448d87f2e6be85271b5fb6f3ca" have entirely different histories.

7fbbc5d3d3

...

45eefaa587

|

|

@ -1,46 +0,0 @@

---
name: image-crop
description: Crop images and get image metadata using sharp library. Use when user asks to crop, trim, cut an image, or get image dimensions/info. Supports JPEG, PNG, WebP.
---

# Image Crop Skill

Image manipulation using the sharp library.

## Scripts

### Get Image Info

```bash
node scripts/image-info.js <image-path>
```

Returns dimensions, format, DPI, alpha channel info.

### Crop Image

```bash
node scripts/crop-image.js <input> <output> <left> <top> <width> <height>
```

Parameters:
- `left`, `top` - offset from top-left corner (pixels)
- `width`, `height` - size of crop area (pixels)

## Workflow Example

1. Get dimensions first:
```bash
node scripts/image-info.js photo.jpg
# Size: 1376x768
```

2. Calculate crop (e.g., remove 95px from top and bottom):
```bash
# new_height = 768 - 95 - 95 = 578
node scripts/crop-image.js photo.jpg photo-cropped.jpg 0 95 1376 578
```

## Requirements

Node.js with sharp (`npm install sharp`)
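
The crop arithmetic in the workflow example can be sketched as a small helper. This is a minimal sketch and not part of the skill: `cropBoxFromMargins` and its `margins` parameter are hypothetical names, and the helper only computes the `<left> <top> <width> <height>` values that `crop-image.js` takes.

```javascript
// Hypothetical helper (not part of the skill's scripts): turn per-edge margins
// into the left/top/width/height values that crop-image.js expects.
function cropBoxFromMargins(imageWidth, imageHeight, margins = {}) {
  const { top = 0, right = 0, bottom = 0, left = 0 } = margins;
  const width = imageWidth - left - right;
  const height = imageHeight - top - bottom;
  if (width <= 0 || height <= 0) {
    throw new RangeError('Margins remove the whole image');
  }
  return { left, top, width, height };
}

// Same numbers as the workflow example: 1376x768, 95px off the top and bottom.
const box = cropBoxFromMargins(1376, 768, { top: 95, bottom: 95 });
console.log(
  `node scripts/crop-image.js photo.jpg photo-cropped.jpg ${box.left} ${box.top} ${box.width} ${box.height}`
);
```

Keeping the arithmetic in one function makes it harder to forget that the new height subtracts both the top and the bottom margin.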

|

||||

|

|

@ -1,29 +0,0 @@

#!/usr/bin/env node

const sharp = require('sharp');

const args = process.argv.slice(2);

if (args.length < 6) {
  console.error('Usage: crop-image.js <input> <output> <left> <top> <width> <height>');
  console.error('Example: crop-image.js photo.jpg cropped.jpg 0 95 1376 578');
  process.exit(1);
}

const [input, output, left, top, width, height] = args;

sharp(input)
  .extract({
    left: parseInt(left, 10),
    top: parseInt(top, 10),
    width: parseInt(width, 10),
    height: parseInt(height, 10)
  })
  .toFile(output)
  .then(info => {
    console.log(`Saved: ${output} (${info.width}x${info.height})`);
  })
  .catch(err => {
    console.error('Error:', err.message);
    process.exit(1);
  });
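
As written, the script hands the `parseInt` results straight to `extract`, so a non-numeric argument becomes `NaN` and is left for sharp to deal with. One possible guard is sketched below, assuming all four values should be non-negative whole numbers and that the crop area must not be empty. `parseCropArgs` is a hypothetical helper, not part of the original script.

```javascript
// Hypothetical validation step for the four numeric CLI arguments.
// Assumption: offsets and sizes are non-negative integers; width/height > 0.
function parseCropArgs(values) {
  const names = ['left', 'top', 'width', 'height'];
  const box = {};
  names.forEach((name, i) => {
    const raw = String(values[i]);
    // Accept digits only, so inputs such as "12abc" or "" are rejected.
    if (!/^\d+$/.test(raw)) {
      throw new TypeError(`${name} must be a non-negative integer, got "${raw}"`);
    }
    box[name] = Number(raw);
  });
  if (box.width === 0 || box.height === 0) {
    throw new RangeError('width and height must be greater than zero');
  }
  return box;
}

// The resulting object has the same shape as the one passed to extract().
console.log(parseCropArgs(['0', '95', '1376', '578']));
```

A digits-only check is stricter than `parseInt`, which would accept a value such as `12abc` and silently read it as 12.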

|

||||

|

|

@ -1,24 +0,0 @@

#!/usr/bin/env node

const sharp = require('sharp');

const input = process.argv[2];

if (!input) {
  console.error('Usage: image-info.js <image-path>');
  process.exit(1);
}

sharp(input)
  .metadata()
  .then(m => {
    console.log(`Size: ${m.width}x${m.height}`);
    console.log(`Format: ${m.format}`);
    if (m.density) console.log(`DPI: ${m.density}`);
    if (m.hasAlpha) console.log(`Alpha: yes`);
    if (m.orientation) console.log(`Orientation: ${m.orientation}`);
  })
  .catch(err => {
    console.error('Error:', err.message);
    process.exit(1);
  });

|

||||

|

|

@ -1,30 +0,0 @@

---
name: upload-image
description: Upload images to Banatie CDN. Use when user asks to upload an image, get CDN URL, or publish image to CDN. Supports JPEG, PNG, WebP.
argument-hint: <image-path>
---

# Upload Image to Banatie CDN

Upload images to the Banatie CDN and get the CDN URL.

## Requirements

- `BANATIE_API_KEY` in `.env` file
- Dependencies: tsx, form-data, dotenv

## Usage

```bash
tsx .claude/skills/upload-image/scripts/upload.ts <image-path>
```

## Output

- ID: unique image identifier
- URL: CDN URL for the image
- Size: dimensions (if available)

## Supported formats

JPEG, PNG, WebP
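
A caller may want to check the file extension before invoking the upload script. The following is a minimal sketch, assuming the supported formats correspond to the extensions `.jpg`, `.jpeg`, `.png`, and `.webp`. `isSupportedImage` is a hypothetical helper and is not part of the skill.

```javascript
// Extensions assumed from the "Supported formats" list above.
const SUPPORTED_EXTENSIONS = ['.jpg', '.jpeg', '.png', '.webp'];

// Hypothetical pre-upload check: case-insensitive match on the file extension.
function isSupportedImage(filePath) {
  const lower = filePath.toLowerCase();
  return SUPPORTED_EXTENSIONS.some((ext) => lower.endsWith(ext));
}

for (const file of ['photo.JPG', 'diagram.webp', 'clip.gif']) {
  console.log(file, isSupportedImage(file) ? 'ok to upload' : 'unsupported format');
}
```

This only looks at the file name. It does not inspect the file contents, so a renamed file would still pass.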

|

||||

|

|

@ -1,117 +0,0 @@

import * as fs from "fs";
import * as path from "path";
import * as https from "https";
import "dotenv/config";
import FormData from "form-data";

const API_URL = "https://api.banatie.app/api/v1/images/upload";

interface UploadResponse {
  success: boolean;
  data: {
    id: string;
    storageUrl: string;
    alias?: string;
    source: string;
    width: number | null;
    height: number | null;
    mimeType: string;
    fileSize: number;
  };
}

function getMimeType(filePath: string): string {
  const ext = path.extname(filePath).toLowerCase();
  const mimeTypes: Record<string, string> = {
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".png": "image/png",
    ".webp": "image/webp",
  };
  return mimeTypes[ext] || "application/octet-stream";
}

async function uploadImage(filePath: string): Promise<UploadResponse> {
  const apiKey = process.env.BANATIE_API_KEY;
  if (!apiKey) {
    throw new Error("BANATIE_API_KEY not found in environment");
  }

  const absolutePath = path.resolve(filePath);
  if (!fs.existsSync(absolutePath)) {
    throw new Error(`File not found: ${absolutePath}`);
  }

  const fileName = path.basename(absolutePath);
  const mimeType = getMimeType(absolutePath);

  const form = new FormData();
  form.append("file", fs.createReadStream(absolutePath), {
    filename: fileName,
    contentType: mimeType,
  });

  return new Promise((resolve, reject) => {
    const url = new URL(API_URL);

    const req = https.request(
      {
        hostname: url.hostname,
        port: 443,
        path: url.pathname,
        method: "POST",
        headers: {
          "X-API-Key": apiKey,
          ...form.getHeaders(),
        },
      },
      (res) => {
        let data = "";
        res.on("data", (chunk) => (data += chunk));
        res.on("end", () => {
          try {
            const json = JSON.parse(data);
            if (res.statusCode === 200 || res.statusCode === 201) {
              resolve(json);
            } else {
              reject(
                new Error(`Upload failed (${res.statusCode}): ${data}`)
              );
            }
          } catch {
            reject(new Error(`Invalid response: ${data}`));
          }
        });
      }
    );

    req.on("error", reject);
    form.pipe(req);
  });
}

async function main() {
  const filePath = process.argv[2];

  if (!filePath) {
    console.error("Usage: pnpm upload:image <path-to-image>");
    process.exit(1);
  }

  try {
    console.log(`Uploading: ${filePath}`);
    const result = await uploadImage(filePath);

    console.log(`\nSuccess!`);
    console.log(`ID: ${result.data.id}`);
    console.log(`URL: ${result.data.storageUrl}`);
    if (result.data.width && result.data.height) {
      console.log(`Size: ${result.data.width}x${result.data.height}`);
    }
  } catch (error) {
    console.error("Error:", error instanceof Error ? error.message : error);
    process.exit(1);
  }
}

main();

|

||||

1

.env

|

|

@ -1,4 +1,3 @@

|

|||

DATAFORSEO_API_LOGIN=regx@usul.su

|

||||

DATAFORSEO_API_PASS=4f4b51b823df234c

|

||||

DATAFORSEO_API_CREDENTIALS=cmVneEB1c3VsLnN1OjRmNGI1MWI4MjNkZjIzNGM=

|

||||

BANATIE_API_KEY=bnt_e6eb544c505922b9bfe5b088e067fc3940efff16b1b88585c5518946630d4a66

|

||||

|

|

|

|||

|

|

@ -27,10 +27,6 @@

|

|||

"whois": {

|

||||

"command": "npx",

|

||||

"args": ["-y", "whois-mcp"]

|

||||

},

|

||||

"chrome-devtools": {

|

||||

"command": "npx",

|

||||

"args": ["-y", "chrome-devtools-mcp@latest"]

|

||||

}

|

||||

}

|

||||

}

|

||||

|

|

|

|||

|

|

@ -1,46 +0,0 @@

|

|||

---

|

||||

slug: ai-coding-methodologies-beyond-vibe-coding

|

||||

title: "AI Coding Methodologies: Beyond Vibe Coding"

|

||||

author: henry-technical

|

||||

status: inbox

|

||||

created: 2025-01-22

|

||||

updated: 2025-01-22

|

||||

content_type: explainer

|

||||

primary_keyword: ""

|

||||

secondary_keywords: []

|

||||

assets_folder: assets/ai-coding-methodologies-beyond-vibe-coding/

|

||||

---

|

||||

|

||||

# Idea

|

||||

|

||||

**Source:** Perplexity research on AI-assisted development terminology (Jan 2025)

|

||||

|

||||

**Concept:** Overview article covering AI coding methodologies landscape. Position vibe coding as casual/risky approach, contrast with professional techniques (Spec-Driven Development, AI-DLC, Agentic Coding, etc.).

|

||||

|

||||

**Goal:** Establish Henry's expertise in AI-assisted development space. Warm-up article for Dev.to account.

|

||||

|

||||

**Angle:** "Wide view" — Henry surveys the field and shares his practical preferences.

|

||||

|

||||

---

|

||||

|

||||

# Brief

|

||||

|

||||

*pending @strategist*

|

||||

|

||||

---

|

||||

|

||||

# Outline

|

||||

|

||||

See [outline.md](assets/ai-coding-methodologies-beyond-vibe-coding/outline.md)

|

||||

|

||||

# Draft

|

||||

|

||||

See [text.md](assets/ai-coding-methodologies-beyond-vibe-coding/text.md)

|

||||

|

||||

# SEO

|

||||

|

||||

See [seo-metadata.md](assets/ai-coding-methodologies-beyond-vibe-coding/seo-metadata.md)

|

||||

|

||||

# Activity Log

|

||||

|

||||

See [log-chat.md](assets/ai-coding-methodologies-beyond-vibe-coding/log-chat.md)

|

||||

|

|

@ -0,0 +1,52 @@

|

|||

---

|

||||

slug: midjourney-alternatives-bn-blog

|

||||

title: "Best Midjourney Alternatives in 2026"

|

||||

author: banatie

|

||||

status: planning

|

||||

created: 2026-01-12

|

||||

updated: 2026-01-12

|

||||

content_type: comparison

|

||||

channel: banatie.app/blog

|

||||

primary_keyword: "midjourney alternative"

|

||||

primary_volume: 1300

|

||||

primary_kd: 3

|

||||

secondary_keywords:

|

||||

- "midjourney alternatives"

|

||||

- "midjourney api"

|

||||

- "leonardo ai"

|

||||

- "stable diffusion"

|

||||

- "flux ai"

|

||||

- "chatgpt image generator"

|

||||

estimated_traffic: 700-1200

|

||||

---

|

||||

|

||||

# Midjourney Alternatives — Comparison Guide

|

||||

|

||||

## Summary

|

||||

|

||||

Comprehensive comparison of AI image generation tools as Midjourney alternatives. Covers UI-first services, open source options, API-first platforms, and aggregators.

|

||||

|

||||

**Strategic value:** Ultra-low KD (3), solid volume (1,300), quick win for domain authority.

|

||||

|

||||

**Banatie positioning:** API-First Platforms section — developer workflow native with MCP integration.

|

||||

|

||||

---

|

||||

|

||||

## Assets

|

||||

|

||||

- `assets/midjourney-alternatives-bn-blog/brief.md` — full brief with structure and requirements

|

||||

|

||||

---

|

||||

|

||||

## Log

|

||||

|

||||

### 2026-01-12 — @strategist

|

||||

Created brief. Consolidated from three inbox ideas. Keyword research completed via DataForSEO ($0.35 spent).

|

||||

|

||||

Categories defined:

|

||||

1. Models with Native Service (UI-First)

|

||||

2. Open Source / Self-Hosted

|

||||

3. API-First Platforms ← Banatie here

|

||||

4. Aggregators (Multi-Model)

|

||||

|

||||

Next: @architect creates outline.

|

||||

|

|

@ -1,156 +0,0 @@

|

|||

---

|

||||

slug: beyond-vibe-coding

|

||||

title: "Beyond Vibe Coding: Professional AI Development Methodologies"

|

||||

author: henry-technical

|

||||

status: drafting

|

||||

created: 2026-01-22

|

||||

updated: 2026-01-24

|

||||

content_type: explainer

|

||||

primary_keyword: "ai coding methodologies"

|

||||

secondary_keywords: ["spec driven development", "ai pair programming", "human in the loop ai", "ralph loop"]

|

||||

assets_folder: assets/beyond-vibe-coding/

|

||||

---

|

||||

|

||||

# Idea

|

||||

|

||||

**Source:** Perplexity research on AI-assisted development terminology (Jan 2026)

|

||||

|

||||

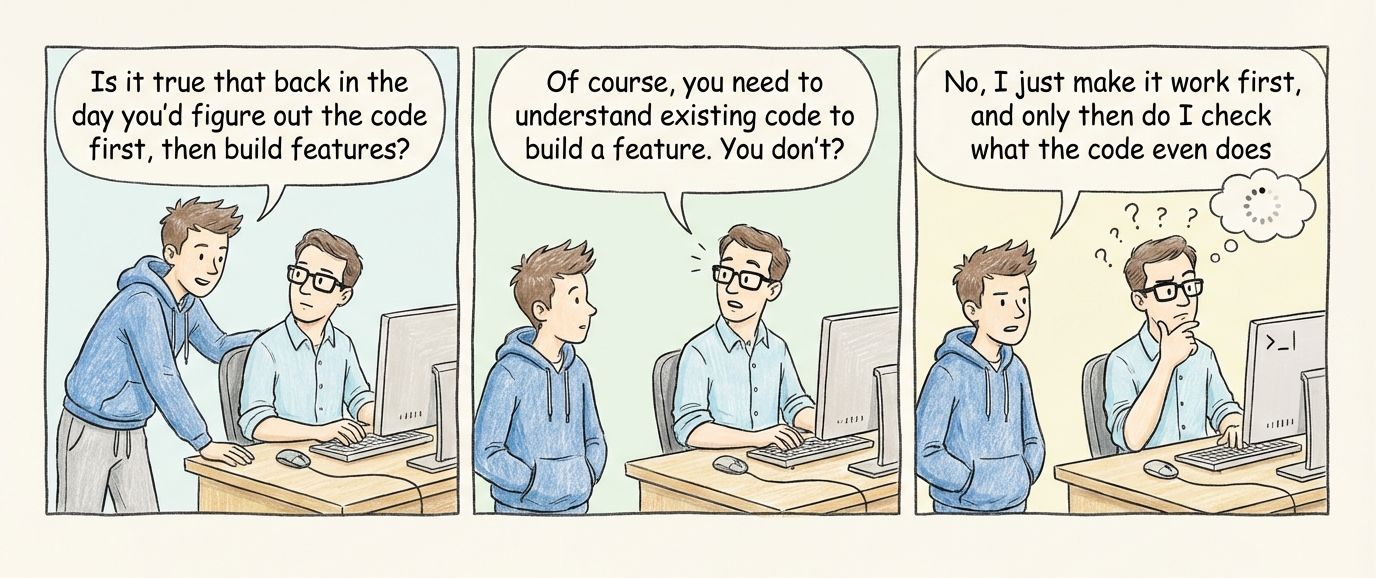

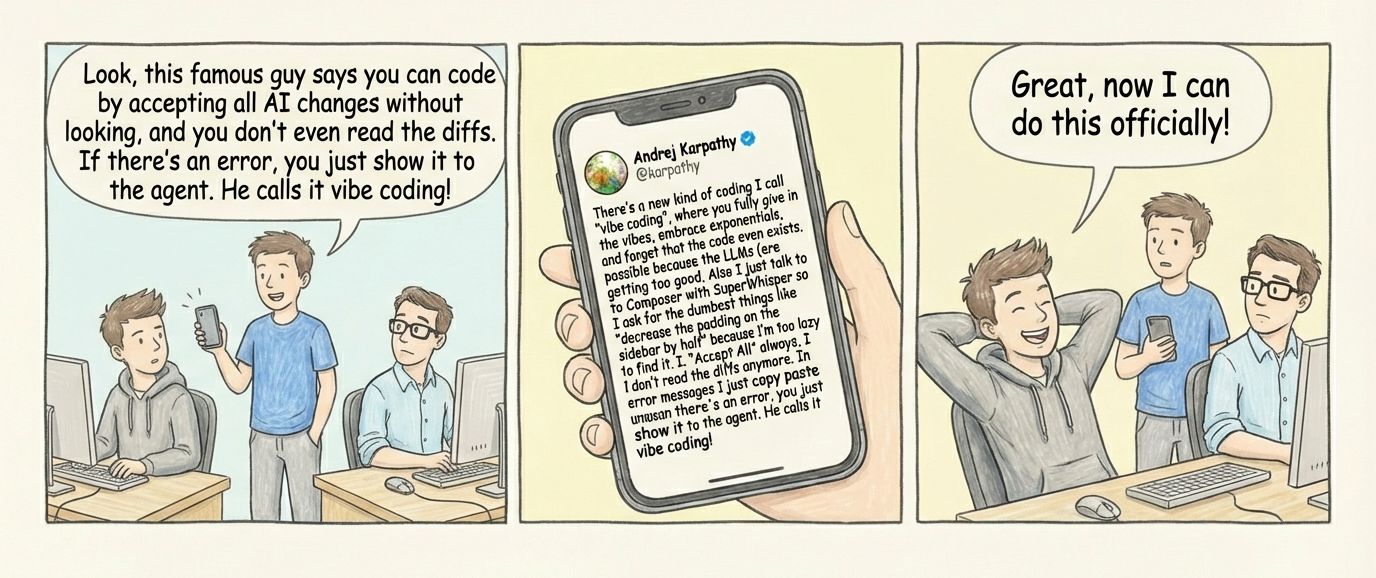

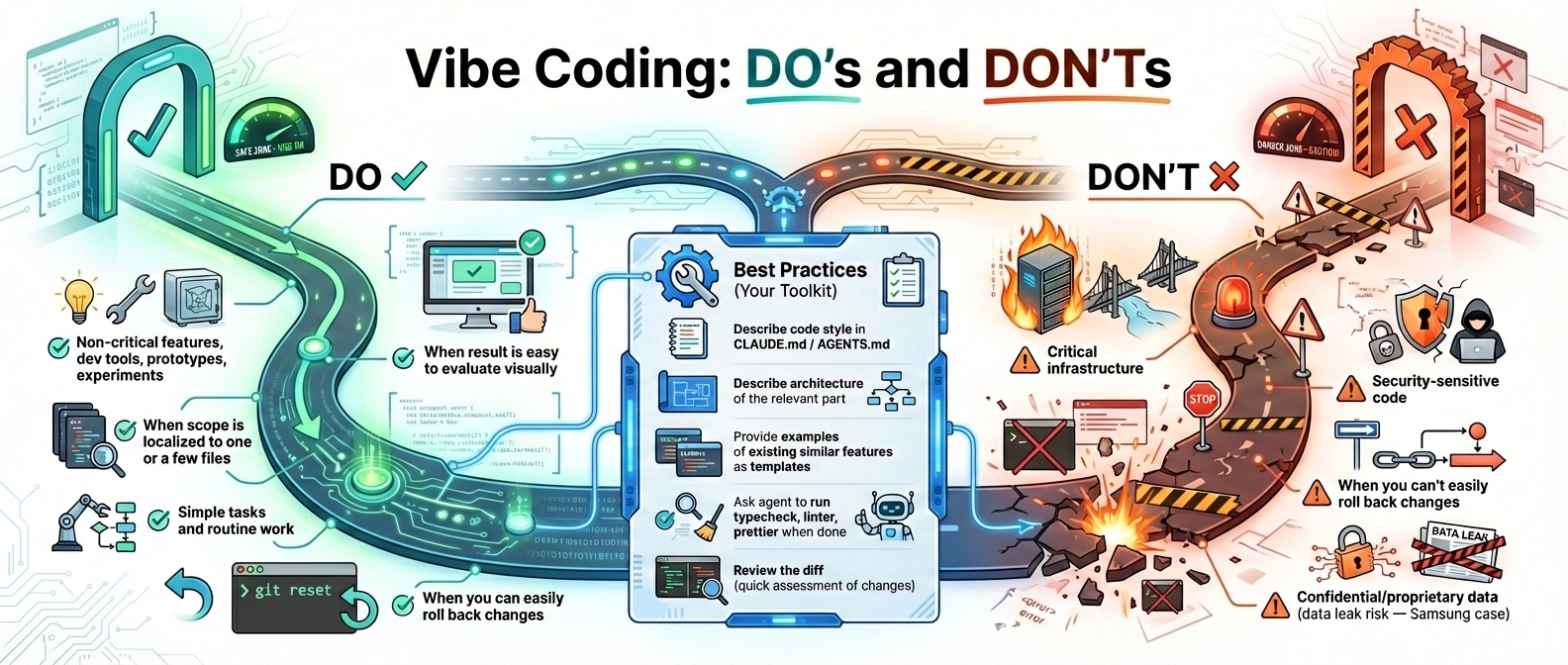

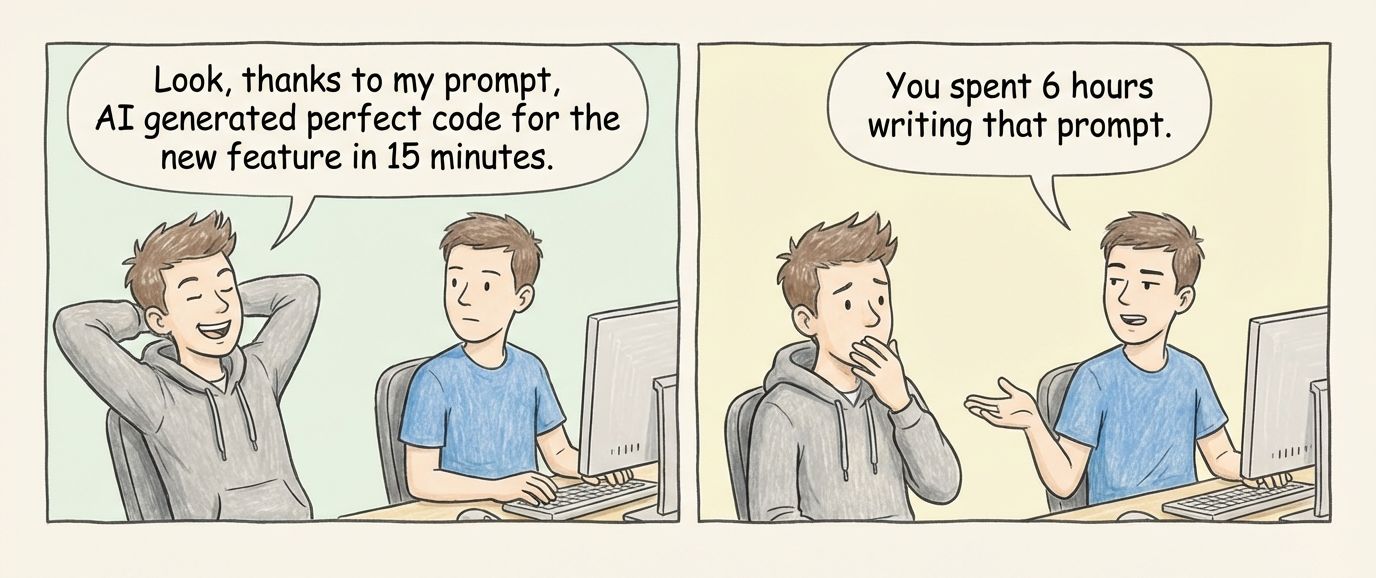

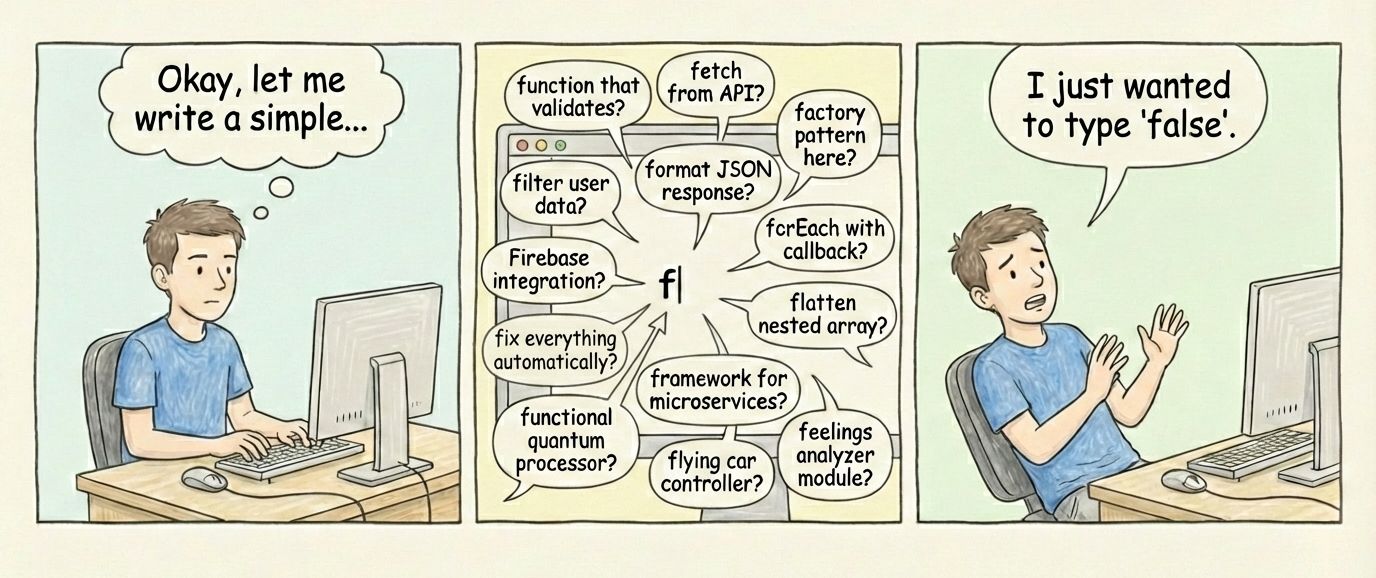

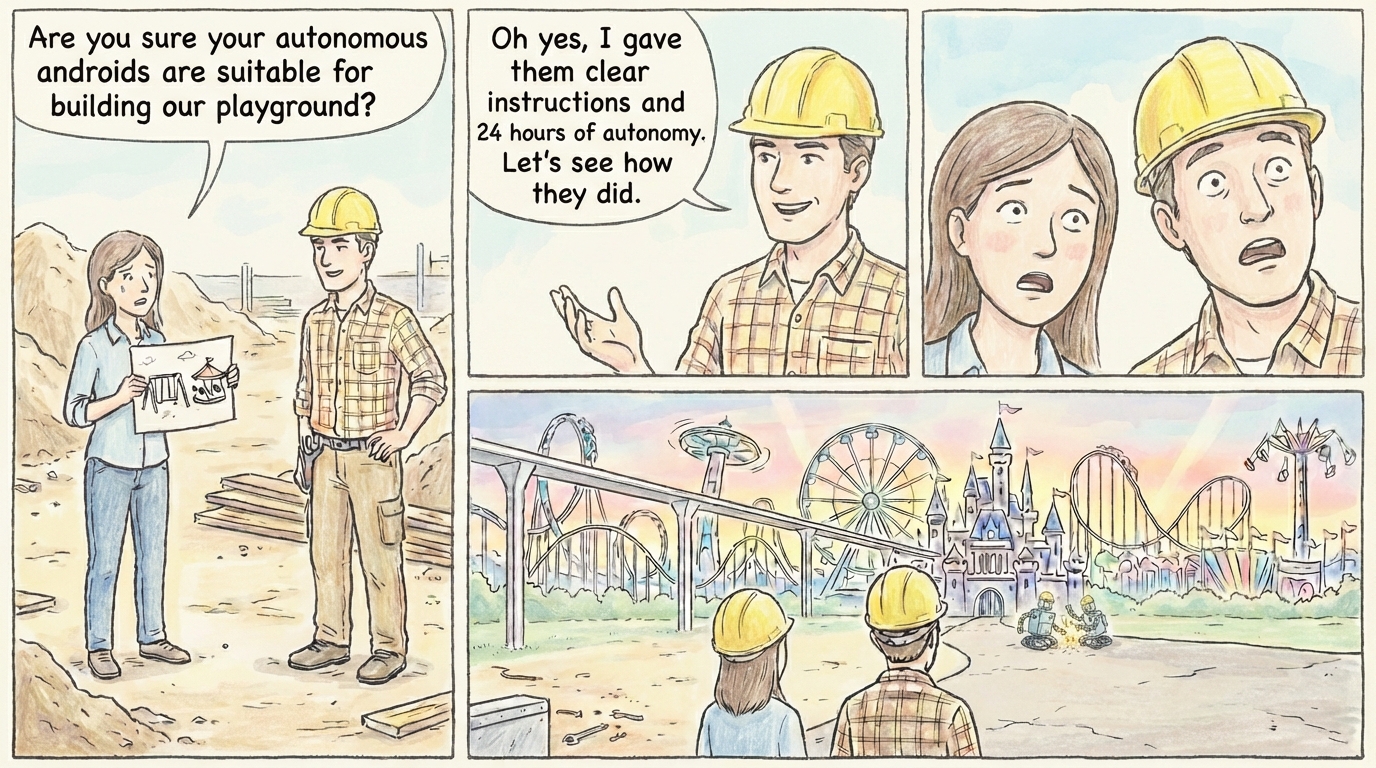

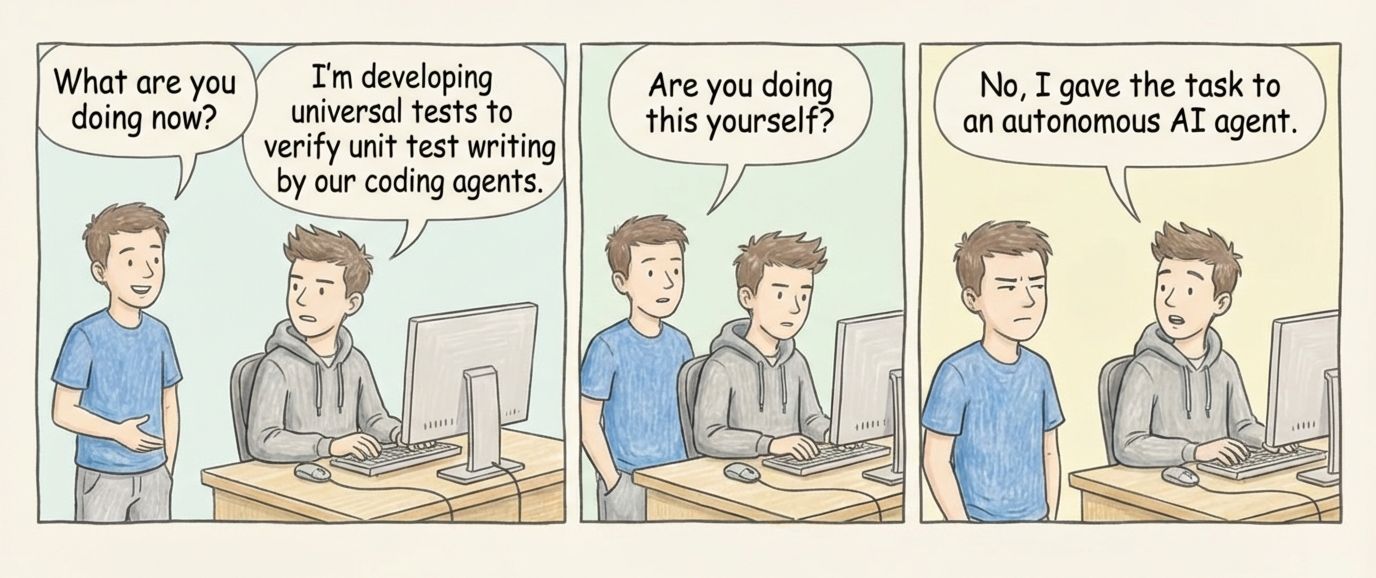

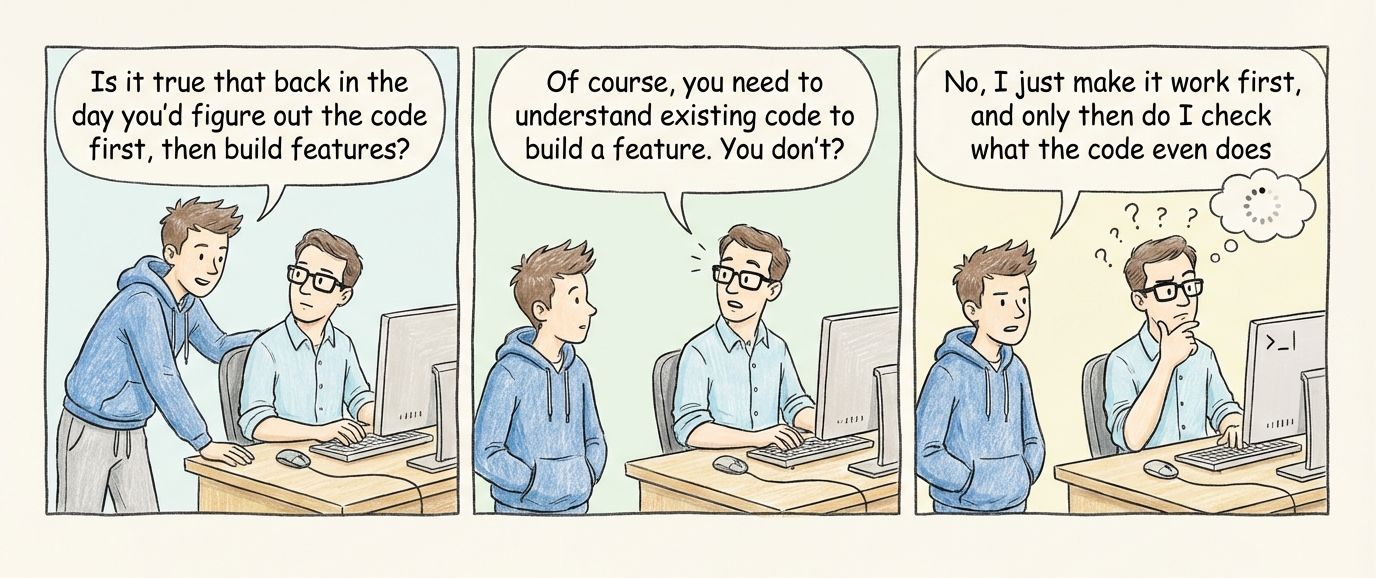

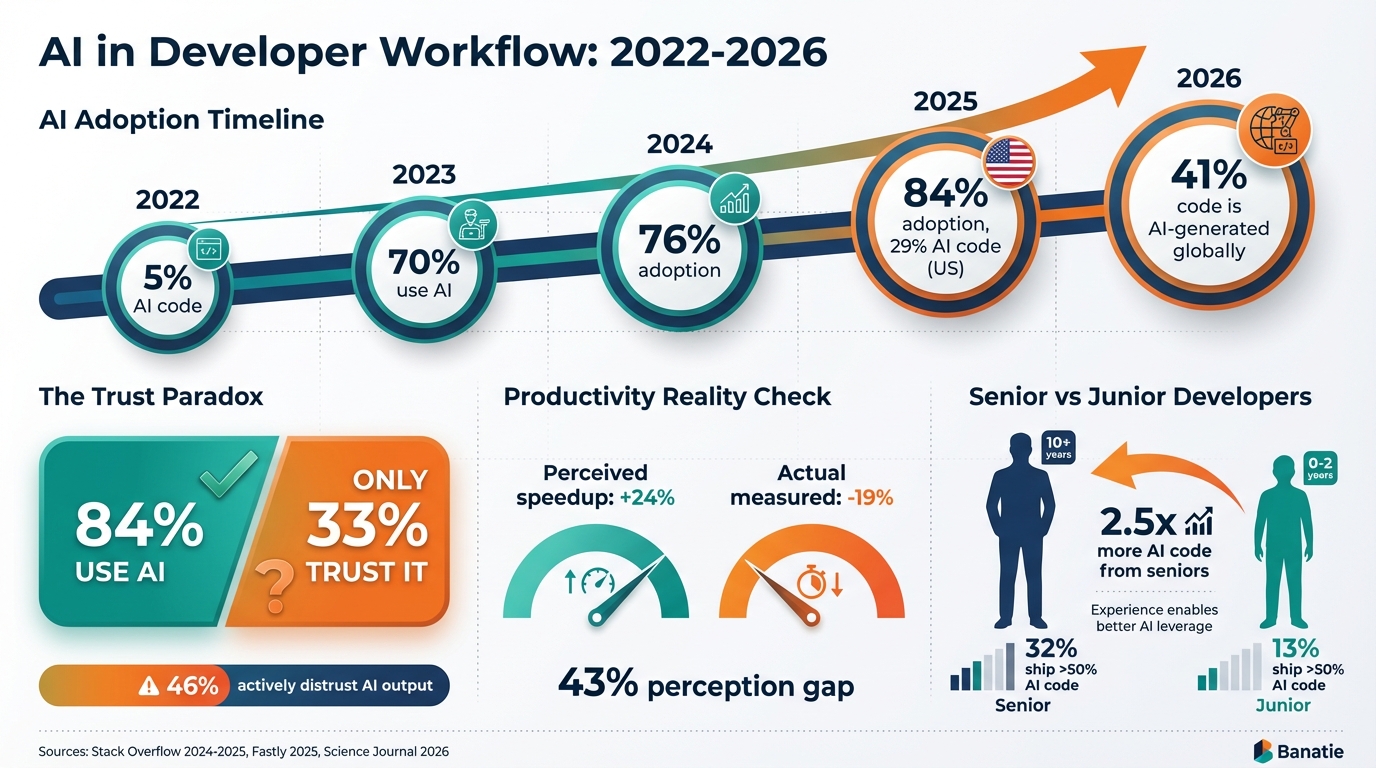

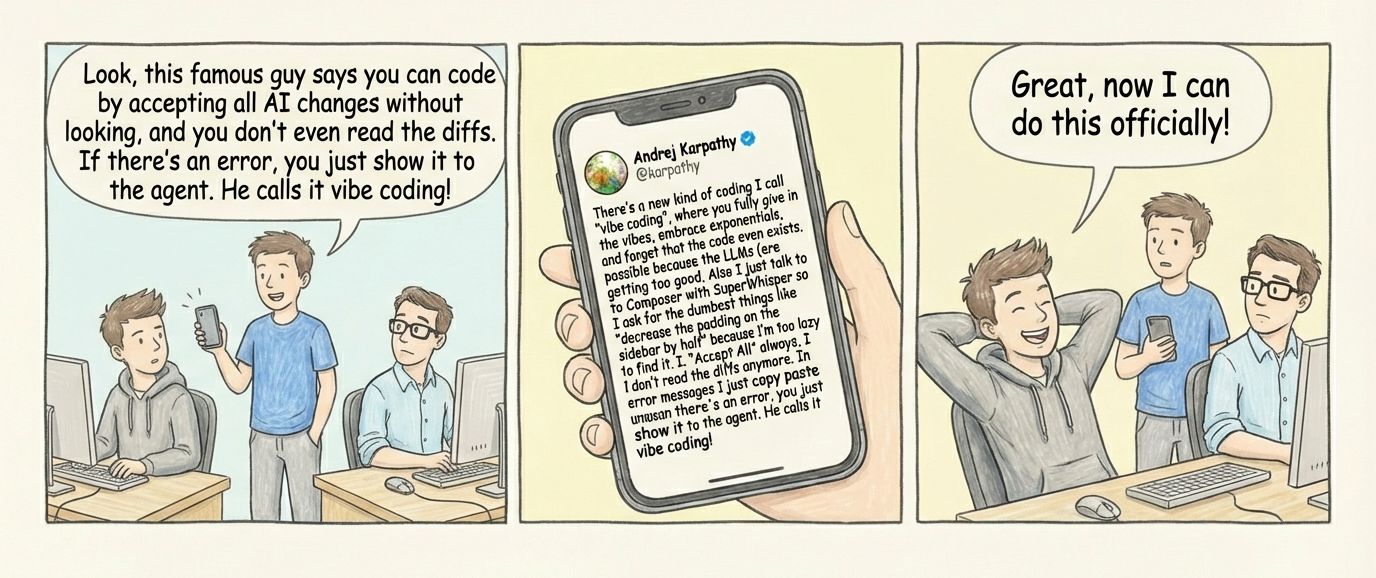

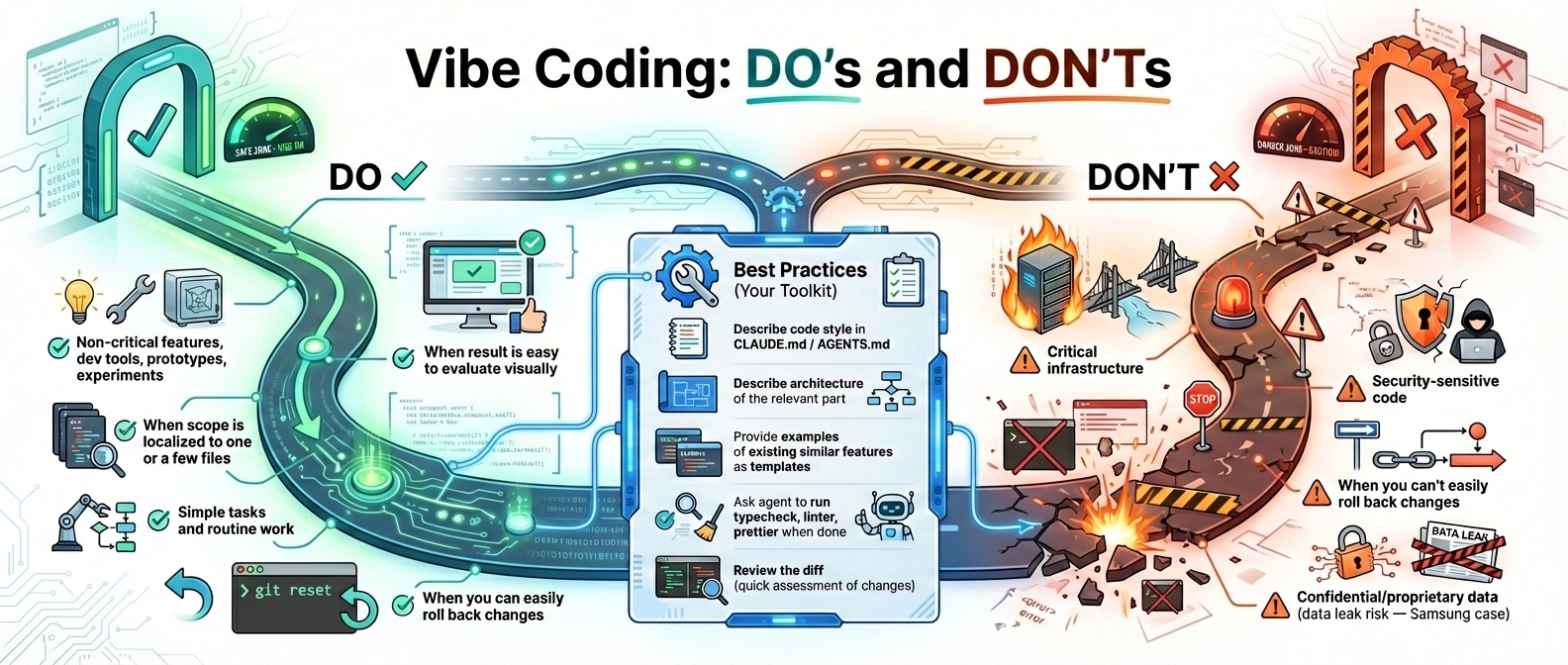

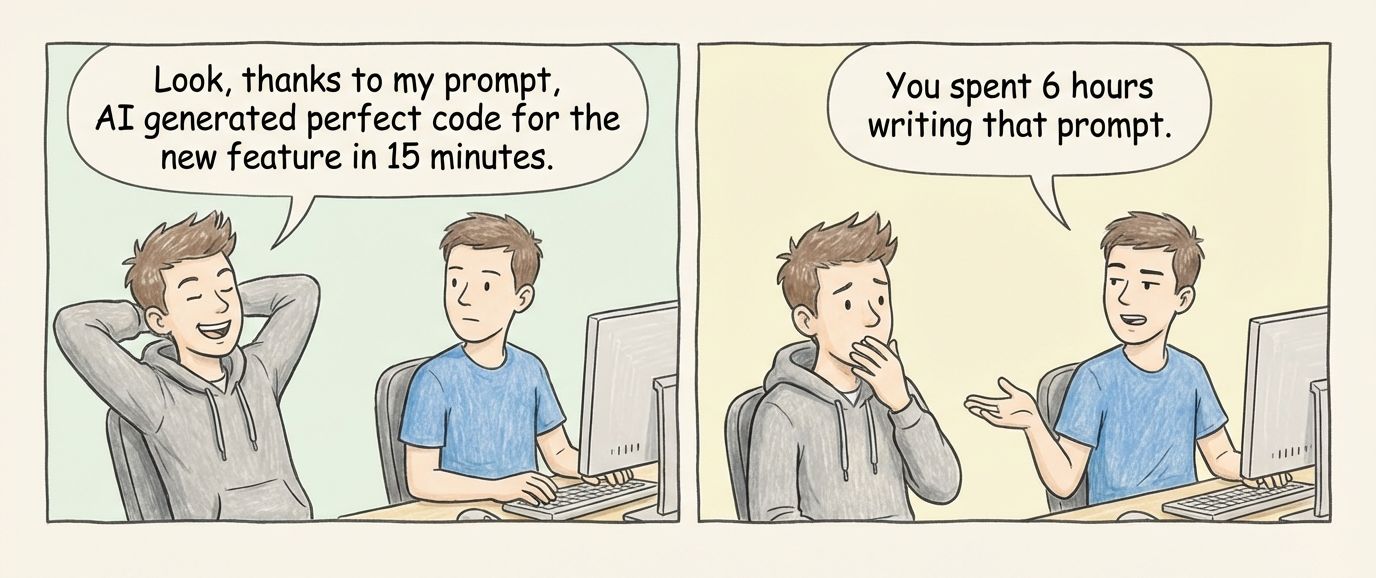

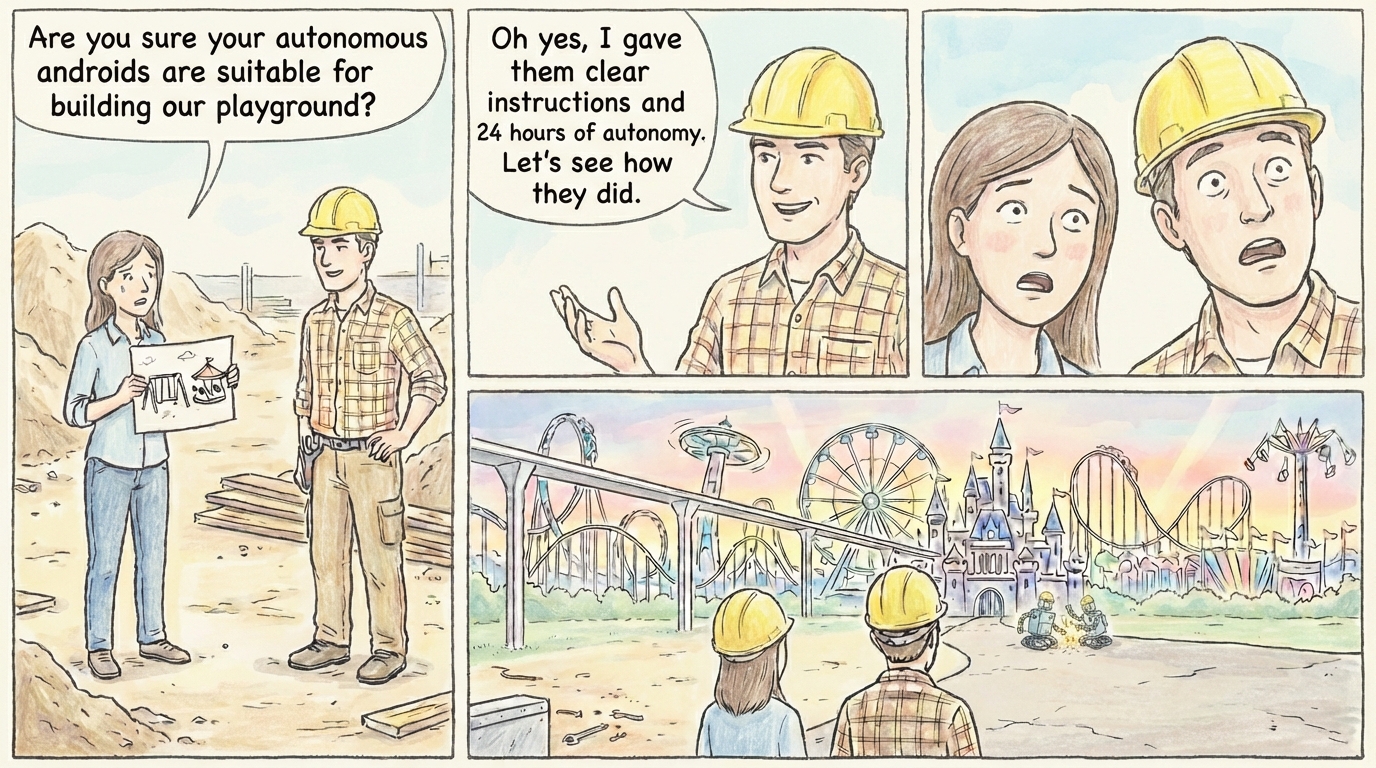

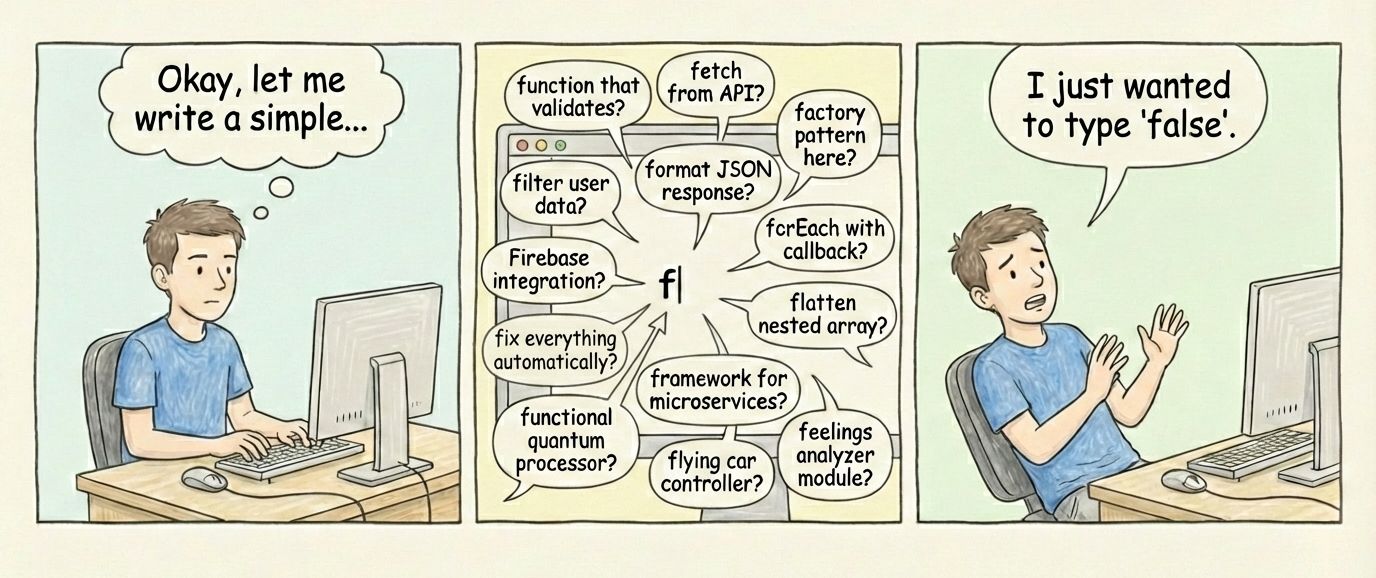

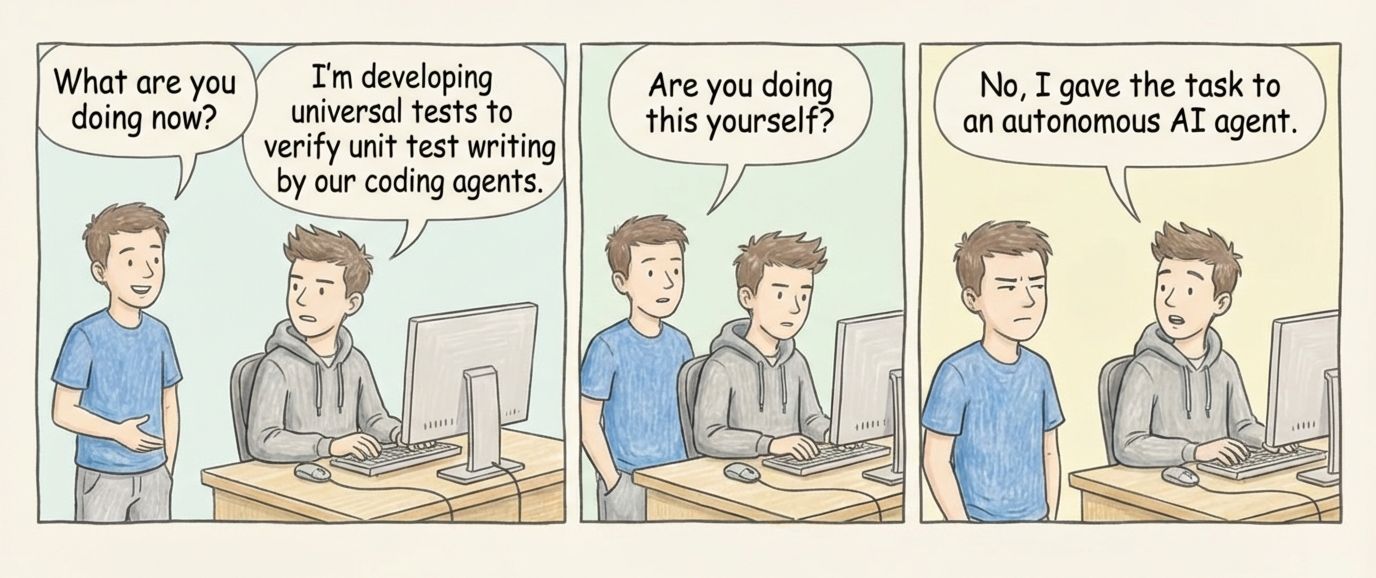

**Concept:** Overview article covering AI coding methodologies landscape. Position vibe coding (Collins Word of Year 2025) as entry point, then survey professional approaches: Spec-Driven Development, Agentic Coding, AI Pair Programming, HITL, TDD+AI.

|

||||

|

||||

**Goal:** Establish Henry's expertise in AI-assisted development. Second article for Dev.to account warmup.

|

||||

|

||||

**Angle:** Survey + practitioner perspective (via interview with Oleg)

|

||||

|

||||

---

|

||||

|

||||

# Brief

|

||||

|

||||

See [brief.md](assets/beyond-vibe-coding/brief.md) for complete strategic context, target reader analysis, content requirements, and success criteria.

|

||||

|

||||

**Quick Summary:**

|

||||

- **Goal:** Fight "AI is for juniors" stigma with data-backed professional methodologies survey

|

||||

- **Angle:** Seniors use AI MORE than juniors — methodology separates pros from beginners

|

||||

- **Format:** Survey of 6 methodologies with credentials, practitioner insights

|

||||

- **Target:** 2,500-3,500 words, thought leadership + long-tail SEO

|

||||

|

||||

---

|

||||

|

||||

# Outline

|

||||

|

||||

See [outline.md](assets/beyond-vibe-coding/outline.md) for complete article structure.

|

||||

|

||||

**Tone:** "Here's what exists and here's what I actually do" — landscape survey through practitioner's lens, not prescriptive guide

|

||||

|

||||

**Structure:**

|

||||

- Introduction (400w) — Hook with vibe coding, establish legitimacy question

|

||||

- 6 Methodology sections (400-500w each) — Credentials block, description, Henry's experience (integrated naturally)

|

||||

- Conclusion (450w) — Landscape overview, legitimacy validation with stats, what I use, community invitation

|

||||

|

||||

**Total:** ~2,800 words

|

||||

**Code examples:** 3 (CLAUDE.md spec, .claude/settings.json, TDD test)

|

||||

|

||||

---

|

||||

|

||||

# Validation Status

|

||||

|

||||

**Validated:** 2026-01-23

|

||||

**Validator:** @validator

|

||||

**Verdict:** REVISE → COMPLETE ✅

|

||||

|

||||

See [validation-results.md](assets/beyond-vibe-coding/validation-results.md) for complete validation report.

|

||||

|

||||

**Summary:**

|

||||

- ✅ **4 claims fully verified:** Senior/junior AI usage, 76% adoption, 27% bans, Ralph Loop virality

|

||||

- ✅ **Security vulnerabilities claim updated:** Added source citations [1][2][3]

|

||||

- ✅ **Removed false claims:** "359x growth" for SDD, "90% Fortune 100 Copilot adoption"

|

||||

- ✅ **Minor stat correction:** "33%" → "about a third" for senior developers

|

||||

|

||||

**Revisions Applied by @architect:**

|

||||

1. Removed Claim 4 (90% Fortune 100) from Conclusion section

|

||||

2. Removed Claim 6 (359x growth) from Spec-Driven credentials, replaced with qualitative description

|

||||

3. Added source citations for Claim 3 (security vulnerabilities): Georgetown CSET, Veracode, industry reports

|

||||

4. Updated Claim 1 to "about a third" instead of "33%" in Introduction and Conclusion

|

||||

|

||||

**Next Step:** Ready for @writer to create Draft

|

||||

|

||||

---

|

||||

|

||||

# Assets Index

|

||||

|

||||

All working files for this article:

|

||||

|

||||

## Core Files

|

||||

|

||||

| File | Purpose | Status |
|------|---------|--------|
| [brief.md](assets/beyond-vibe-coding/brief.md) | Complete Brief: strategic context, target reader, requirements, success criteria | ✅ Complete |
| [outline.md](assets/beyond-vibe-coding/outline.md) | Article structure with word budgets | ✅ Revised & Complete |
| [text.md](assets/beyond-vibe-coding/text.md) | Article draft (English) | ✅ Draft complete |
| [text-rus.md](assets/beyond-vibe-coding/text-rus.md) | Article draft (Russian) | ✅ Complete |
| [interview.md](assets/beyond-vibe-coding/interview.md) | Oleg's practitioner insights — source for Henry's voice | ✅ Complete |
| [log-chat.md](assets/beyond-vibe-coding/log-chat.md) | Activity log and agent comments | ⏳ Active |
| [seo-metadata.md](assets/beyond-vibe-coding/seo-metadata.md) | SEO title, description, keywords | ⏳ Pending @seo |

|

||||

|

||||

## Methodology Specs

|

||||

|

||||

Detailed research for each methodology — use for expanding credentials in text.md:

|

||||

|

||||

| File | Methodology | Key Sources |
|------|-------------|-------------|
| [spec-driven-dev.md](assets/beyond-vibe-coding/spec-driven-dev.md) | Spec-Driven Development | GitHub Spec Kit, AWS Kiro, Tessl, Martin Fowler |
| [agentic-coding.md](assets/beyond-vibe-coding/agentic-coding.md) | Agentic Coding + Ralph Loop | arXiv papers, Geoffrey Huntley, Cursor 2.0, GitHub Copilot Agent Mode |
| [ai-pair-programming.md](assets/beyond-vibe-coding/ai-pair-programming.md) | AI Pair Programming | GitHub Copilot official, Microsoft Learn, Cursor, Windsurf |
| [ai-aided-test-first.md](assets/beyond-vibe-coding/ai-aided-test-first.md) | TDD + AI | Thoughtworks Radar, Kent Beck, DORA Report 2025, Builder.io |

|

||||

|

||||

## Statistics & Research

|

||||

|

||||

| File | Purpose | Status |
|------|---------|--------|
| [ai-usage-statistics.md](assets/beyond-vibe-coding/ai-usage-statistics.md) | Statistical research: AI adoption by seniority, company policies, security concerns | ✅ Complete |
| [ai-adoption-statistics.md](assets/beyond-vibe-coding/ai-adoption-statistics.md) | LaTeX-formatted statistics for infographics (2024-2026 data) | ✅ Complete |
| [research-index.md](assets/beyond-vibe-coding/research-index.md) | Methodology clusters, verified sources, interview questions | ⏳ Needs update |
| [validation-results.md](assets/beyond-vibe-coding/validation-results.md) | Fact-checking results for all statistical claims | ✅ Complete |

|

||||

|

||||

## Images

|

||||

|

||||

| Folder | Contents | Status |
|--------|----------|--------|
| [images/comic/](assets/beyond-vibe-coding/images/comic/) | 8 comic illustrations, uploaded to CDN | ✅ Ready |
| [images/infographic/](assets/beyond-vibe-coding/images/infographic/) | Infographics (based on ai-adoption-statistics.md) | ⏳ In progress |
| [images/comic/cdn-urls.md](assets/beyond-vibe-coding/images/comic/cdn-urls.md) | CDN URLs for all comic images | ✅ Complete |

|

||||

|

||||

## External Research

|

||||

|

||||

| File | Purpose |
|------|---------|
| [perplexity-chats/AI-Assisted Development_...](research/perplexity-chats/) | Original Perplexity research on terminology |

|

||||

|

||||

---

|

||||

|

||||

# TODO: Part 4 (Potential Future Addition)

|

||||

|

||||

Consider adding a fourth part to the series covering additional methodologies:

|

||||

|

||||

| Methodology | Description | Status |
|-------------|-------------|--------|
| Architecture-First AI Development | Design patterns and system architecture before AI implementation | ⏳ Needs research |
| Prompt-Driven Development | Structured prompt engineering as development methodology | ⏳ Needs research |
| Copy-pasting from AI chatbot | Manual workflow — baseline to compare other methods against | ⏳ Needs research |

|

||||

|

||||

**Rationale:** These approaches represent common patterns not covered in Parts 1-3:

|

||||

- Architecture-First — enterprise/complex systems angle

|

||||

- Prompt-Driven — bridges gap between vibe coding and spec-driven

|

||||

- Copy-pasting — the "default" many developers start with, important baseline

|

||||

|

||||

**Next steps:**

|

||||

1. Research each methodology for credentials and sources

|

||||

2. Conduct interview with Oleg for Henry's perspective

|

||||

3. Assess if volume/interest justifies a Part 4

|

||||

|

||||

---

|

||||

|

||||

# Activity Log

|

||||

|

||||

See [log-chat.md](assets/beyond-vibe-coding/log-chat.md)

|

||||

|

||||

**Latest:** @writer completed draft (2026-01-24). 2,650 words, 8 image placeholders for @image agent. No code snippets per user request.

|

||||

|

|

@ -1,67 +0,0 @@

|

|||

---

|

||||

slug: midjourney-alternatives-bn-blog

|

||||

title: "Best Midjourney Alternatives in 2026"

|

||||

author: banatie

|

||||

status: drafting

|

||||

created: 2026-01-12

|

||||

updated: 2026-01-13

|

||||

content_type: comparison

|

||||

channel: banatie.app/blog

|

||||

assets_folder: assets/midjourney-alternatives-bn-blog/

|

||||

primary_keyword: "midjourney alternative"

|

||||

primary_volume: 1300

|

||||

primary_kd: 3

|

||||

secondary_keywords:

|

||||

- "midjourney alternatives"

|

||||

- "midjourney api"

|

||||

- "leonardo ai"

|

||||

- "stable diffusion"

|

||||

- "flux ai"

|

||||

- "chatgpt image generator"

|

||||

estimated_traffic: 700-1200

|

||||

---

|

||||

|

||||

# Midjourney Alternatives — Comparison Guide

|

||||

|

||||

## Summary

|

||||

|

||||

Comprehensive comparison of AI image generation tools as Midjourney alternatives. Covers UI-first services, open source options, API-first platforms, and aggregators.

|

||||

|

||||

**Strategic value:** Ultra-low KD (3), solid volume (1,300), quick win for domain authority.

|

||||

|

||||

**Banatie positioning:** API-First Platforms section — developer workflow native with MCP integration.

|

||||

|

||||

---

|

||||

|

||||

# Outline

|

||||

|

||||

See [outline.md](assets/midjourney-alternatives-bn-blog/outline.md)

|

||||

|

||||

---

|

||||

|

||||

# Draft

|

||||

|

||||

See [text.md](assets/midjourney-alternatives-bn-blog/text.md)

|

||||

|

||||

---

|

||||

|

||||

# SEO

|

||||

|

||||

*Pending — waiting for @seo*

|

||||

|

||||

---

|

||||

|

||||

# Activity Log

|

||||

|

||||

See [log-chat.md](assets/midjourney-alternatives-bn-blog/log-chat.md)

|

||||

|

||||

---

|

||||

|

||||

# Assets

|

||||

|

||||

- [brief.md](assets/midjourney-alternatives-bn-blog/brief.md) — full brief with structure and requirements

|

||||

- [research-complete.md](assets/midjourney-alternatives-bn-blog/research-complete.md) — research on 19 services

|

||||

- [outline.md](assets/midjourney-alternatives-bn-blog/outline.md) — article structure

|

||||

- [text.md](assets/midjourney-alternatives-bn-blog/text.md) — article body

|

||||

- [homepages.md](assets/midjourney-alternatives-bn-blog/homepages.md) — homepage screenshots index

|

||||

- [log-chat.md](assets/midjourney-alternatives-bn-blog/log-chat.md) — agent activity log

|

||||

|

|

@ -2,7 +2,7 @@

|

|||

|

||||

## Overview

|

||||

|

||||

This is a **content repository** for Banatie ([banatie.app](https://banatie.app/)) blog and website. Content is created by 10 Claude Desktop agents. You (Claude Code) manage files and structure.

|

||||

This is a **content repository** for Banatie blog and website. Content is created by 10 Claude Desktop agents. You (Claude Code) manage files and structure.

|

||||

|

||||

**Core principle:** One markdown file = one article. Files move between stage folders like kanban cards.

|

||||

|

||||

|

|

|

|||

|

|

@ -1,20 +0,0 @@

|

|||

# Activity Log

|

||||

|

||||

## 2025-01-22 @strategist

|

||||

|

||||

**Action:** Initial setup, research intake

|

||||

|

||||

**Changes:**

|

||||

- Created article card in `0-inbox/`

|

||||

- Created assets folder structure

|

||||

- Copied Perplexity research to `perplexity-terminology-research.md`

|

||||

- Created `research-index.md` for methodology clustering

|

||||

|

||||

**Notes:**

|

||||

- Goal: warm-up article for Henry's Dev.to account

|

||||

- Approach: survey + personal opinion through interview with Oleg

|

||||

- Need to verify all source links before using

|

||||

|

||||

**For next agent:** @strategist continues with research clustering and interview

|

||||

|

||||

---

|

||||

|

|

@ -1,3 +0,0 @@

|

|||

# Outline

|

||||

|

||||

*pending — will be created after research clustering and interview*

|

||||

|

|

@ -1,423 +0,0 @@

|

|||

# AI-Assisted Development: Clustered Terminology and Approaches

|

||||

|

||||

**Source:** Perplexity Research, January 2026

|

||||

**Original file:** `/research/perplexity-chats/AI-Assisted Development_ Кластеризованная терминол.md`

|

||||

|

||||

---

|

||||

|

||||

## Domain 1: Experimental & Low-Quality Approaches

|

||||

|

||||

### Vibe Coding

|

||||

|

||||

**Authority Rank: 1** | **Perception: Negative**

|

||||

|

||||

**Sources:**

|

||||

1. **Andrej Karpathy** (OpenAI co-founder, Tesla AI Director) — Wikipedia, Feb 2025

|

||||

2. **Collins English Dictionary** — Word of the Year 2025

|

||||

3. **SonarSource** (Code Quality Platform) — code quality analysis

|

||||

|

||||

**Description:**

|

||||

The term was coined by Andrej Karpathy in February 2025 and quickly became a cultural phenomenon: Collins English Dictionary named it Word of the Year 2025. The developer describes a task in natural language and the AI generates the code. The key distinction: **the developer does NOT review the code** and only looks at execution results.

|

||||

|

||||

Simon Willison quote: "If LLM wrote every line of your code, but you reviewed, tested and understood it all — that's not vibe coding, that's using LLM as typing assistant". Key characteristic: accepting AI-generated code without understanding it.

|

||||

|

||||

Critics point to a lack of accountability, maintainability problems, and an increased risk of security vulnerabilities. In May 2025, the Swedish vibe coding app Lovable was found to have security vulnerabilities in 170 of 1,645 web apps created with it (about 10%). In September 2025, Fast Company reported a "vibe coding hangover", with senior engineers citing "development hell" when working with such code.

|

||||

|

||||

Suitable for "throwaway weekend projects" as Karpathy originally intended, but risky for production systems.

|

||||

|

||||

**Links:**

|

||||

- https://en.wikipedia.org/wiki/Vibe_coding

|

||||

- https://www.sonarsource.com/resources/library/vibe-coding/

|

||||

|

||||

---

|

||||

|

||||

## Domain 2: Enterprise & Production-Grade Methodologies

|

||||

|

||||

### AI-Driven Development Life Cycle (AI-DLC)

|

||||

|

||||

**Authority Rank: 1** | **Perception: Positive - Enterprise**

|

||||

|

||||

**Sources:**

|

||||

1. **AWS** — Raja SP, Principal Solutions Architect, July 2025

|

||||

2. **Amazon Q Developer & Kiro** — official AWS platform

|

||||

|

||||

**Description:**

|

||||

Presented by AWS July 2025 as transformative enterprise methodology. Raja SP created AI-DLC with team after working with 100+ large customers.

|

||||

|

||||

AI as **central collaborator** throughout SDLC with two dimensions:

|

||||

1. **AI-Powered Execution with Human Oversight** — AI creates detailed work plans, actively requests clarifications, defers critical decisions to humans

|

||||

2. **Dynamic Team Collaboration** — while AI handles routine tasks, teams unite for real-time problem solving

|

||||

|

||||

**Three phases:** Inception (Mob Elaboration), Construction (Mob Construction), Operations

|

||||

|

||||

**Terminology:** "sprints" → "bolts" (hours/days instead of weeks); Epics → Units of Work

|

||||

|

||||

**Link:** https://aws.amazon.com/blogs/devops/ai-driven-development-life-cycle/

|

||||

|

||||

### Spec-Driven Development (SDD)

|

||||

|

||||

**Authority Rank: 2** | **Perception: Positive - Systematic**

|

||||

|

||||

**Sources:**

|

||||

1. **GitHub Engineering** — Den Delimarsky, Spec Kit toolkit, September 2025

|

||||

2. **ThoughtWorks Technology Radar** — November 2025

|

||||

3. **Red Hat Developers** — October 2025

|

||||

|

||||

**Description:**

|

||||

Emerged 2025 as direct response to "vibe coding" problems. ThoughtWorks included in Technology Radar. GitHub open-sourced Spec Kit September 2025, supports Claude Code, GitHub Copilot, Gemini CLI.

|

||||

|

||||

**Key principle:** specification becomes source of truth, not code.

|

||||

|

||||

**Spec Kit Workflow:**

|

||||

1. **Constitution** — immutable high-level principles (rules file)

|

||||

2. **/specify** — create specification from high-level prompt

|

||||

3. **/plan** — technical planning based on specification

|

||||

4. **/tasks** — break down into manageable phased parts

|

||||

|

||||

**Three interpretations:** Spec-first, Spec-anchored, Spec-as-source

|

||||

|

||||

**Tools:** Amazon Kiro, GitHub Spec Kit, Tessl Framework

|

||||

|

||||

**Links:**

|

||||

- https://github.blog/ai-and-ml/generative-ai/spec-driven-development-with-ai-get-started-with-a-new-open-source-toolkit/

|

||||

- https://www.thoughtworks.com/radar/techniques/spec-driven-development

|

||||

- https://martinfowler.com/articles/exploring-gen-ai/sdd-3-tools.html

|

||||

- https://developer.microsoft.com/blog/spec-driven-development-spec-kit

|

||||

|

||||

### Architecture-First AI Development

|

||||

|

||||

**Authority Rank: 3** | **Perception: Positive - Professional/Mature**

|

||||

|

||||

**Sources:**

|

||||

1. **WaveMaker** — Vikram Srivats (CCO), Prashant Reddy (Head of AI Product Engineering), January 2026

|

||||

2. **ITBrief Industry Analysis** — January 2026

|

||||

|

||||

**Description:**

|

||||

2026 industry shift. Quote Vikram Srivats: "Second coming of AI coding tools must be all about **Architectural Intelligence** — just Artificial Intelligence no longer fits."

|

||||

|

||||

Shift from "vibe coding" experiments to governance, architecture alignment, long-term maintainability.

|

||||

|

||||

**Key characteristics:**

|

||||

- System design before implementation

|

||||

- AI agents with clear roles: Architect, Builder, Guardian

|

||||

- Coding architectural rules, enforcement review processes

|

||||

- Working from formal specifications

|

||||

- Respect for internal organizational standards

|

||||

|

||||

**Links:**

|

||||

- https://itbrief.co.uk/story/ai-coding-tools-face-2026-reset-towards-architecture

|

||||

- https://itbrief.news/story/ai-coding-tools-face-2026-reset-towards-architecture

|

||||

|

||||

---

|

||||

|

||||

## Domain 3: Quality & Validation-Focused Approaches

|

||||

|

||||

### Test-Driven Development with AI (TDD-AI)

|

||||

|

||||

**Authority Rank: 1** | **Perception: Positive - Quality-Focused**

|

||||

|

||||

**Sources:**

|

||||

1. **Galileo AI Research** — August 2025

|

||||

2. **Builder.io Engineering** — August 2025

|

||||

|

||||

**Description:**

|

||||

Traditional TDD adapted for AI systems. Tests written first → AI generates code to pass tests → verify → refactor.

|

||||

|

||||

Statistical testing for non-deterministic AI outputs — critical distinction from traditional TDD.
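
The difference from a classic unit test can be sketched as follows: instead of asserting on one output, the test samples the generator many times and asserts on the pass rate. This is a minimal sketch, not taken from the cited sources; `fake_model`, the 90% success rate, and the 0.8 threshold are all hypothetical stand-ins for a real AI call and a real acceptance bar.

```python
import random
from typing import Callable


def pass_rate(generate: Callable[[], str], check: Callable[[str], bool], runs: int = 50) -> float:
    """Call a non-deterministic generator `runs` times; return the fraction of outputs passing `check`."""
    passed = sum(1 for _ in range(runs) if check(generate()))
    return passed / runs


def fake_model(rng: random.Random) -> str:
    """Hypothetical stand-in for an AI call: answers correctly about 90% of the time."""
    return "4" if rng.random() < 0.9 else "5"


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the demo is repeatable
    rate = pass_rate(lambda: fake_model(rng), lambda out: out == "4", runs=200)
    # The assertion targets the rate across many runs, never a single output.
    assert rate >= 0.8, f"pass rate too low: {rate:.2f}"
    print(f"pass rate: {rate:.2f}")
```

The threshold and number of runs trade off test cost against confidence; both would need tuning per task.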

|

||||

|

||||

**Links:**

|

||||

- https://galileo.ai/blog/tdd-ai-system-architecture

|

||||

- https://galileo.ai/blog/test-driven-development-ai-systems

|

||||

- https://www.builder.io/blog/test-driven-development-ai

|

||||

|

||||

### Human-in-the-Loop (HITL) AI Development

|

||||

|

||||

**Authority Rank: 2** | **Perception: Positive - Responsible**

|

||||

|

||||

**Sources:**

|

||||

1. **Google Cloud Documentation** — 2026

|

||||

2. **Encord Research** — December 2024

|

||||

3. **Atlassian Engineering** — HULA framework, September 2025

|

||||

|

||||

**Description:**

|

||||

Humans actively involved in AI system lifecycle. Continuous feedback and validation loops. Hybrid approach: human judgment + AI execution.

|

||||

|

||||

**HULA (Human-in-the-loop LLM-based Agents)**: Atlassian framework for software development agents.

|

||||

|

||||

**Links:**

|

||||

- https://cloud.google.com/discover/human-in-the-loop

|

||||

- https://encord.com/blog/human-in-the-loop-ai/

|

||||

- https://www.atlassian.com/blog/atlassian-engineering/hula-blog-autodev-paper-human-in-the-loop-software-development-agents

|

||||

|

||||

### Quality-First AI Coding

|

||||

|

||||

**Authority Rank: 3** | **Perception: Positive - Professional**

|

||||

|

||||

**Sources:**

|

||||

1. **Qodo.ai** (formerly CodiumAI) — December 2025

|

||||

|

||||

**Description:**

|

||||

Code integrity at core. Qodo.ai — platform with agentic AI code generation and comprehensive testing.

|

||||

|

||||

Production-ready focus: automatic test generation for every code change. Direct contrast to "vibe coding" — quality non-negotiable.

|

||||

|

||||

**Link:** https://www.qodo.ai/ai-code-review-platform/

|

||||

|

||||

### Deterministic AI Development

|

||||

|

||||

**Authority Rank: 4** | **Perception: Positive - Enterprise/Compliance**

|

||||

|

||||

**Source:** Augment Code Research — August 2025

|

||||

|

||||

**Description:**

|

||||

Identical outputs for identical inputs. Rule-based architectures for predictability. Best for: security scanning, compliance checks, refactoring tasks.

|

||||

|

||||

Hybrid approach: probabilistic reasoning + deterministic execution.
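
A rough illustration of the deterministic half: a rule-based scanner returns the same findings for the same input on every run. The rule names and regular expressions below are hypothetical examples, not rules from the Augment Code guide.

```python
import re
from typing import NamedTuple


class Finding(NamedTuple):
    rule_id: str
    line_no: int


# Hypothetical rule set: each rule is a fixed regex, so results never vary between runs.
RULES = {
    "hardcoded-password": re.compile(r"password\s*=\s*['\"].+['\"]", re.IGNORECASE),
    "eval-call": re.compile(r"\beval\("),
}


def scan(source: str) -> list[Finding]:
    """Apply every rule to every line; order is fixed by line number, then sorted rule id."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for rule_id in sorted(RULES):
            if RULES[rule_id].search(line):
                findings.append(Finding(rule_id, line_no))
    return findings
```

In a hybrid setup, a probabilistic model might propose changes while a check like `scan` decides, deterministically, whether they are accepted.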

|

||||

|

||||

**Link:** https://www.augmentcode.com/guides/deterministic-ai-for-predictable-coding

|

||||

|

||||

---

|

||||

|

||||

## Domain 4: Collaborative Development Patterns

|

||||

|

||||

### AI Pair Programming

|

||||

|

||||

**Authority Rank: 1** | **Perception: Positive - Collaborative**

|

||||

|

||||

**Sources:**

|

||||

1. **GitHub Copilot (Microsoft)** — January 2026

|

||||

2. **Qodo.ai Documentation** — March 2025

|

||||

3. **GeeksforGeeks** — July 2025

|

||||

|

||||

**Description:**

|

||||

AI as "pair programmer" or coding partner. Based on traditional pair programming: driver (human/AI) and navigator (human/AI) roles.

|

||||

|

||||

Real-time collaboration and feedback. Tools: GitHub Copilot, Cursor, Windsurf.

|

||||

|

||||

**Links:**

|

||||

- https://code.visualstudio.com/docs/copilot/overview

|

||||

- https://www.qodo.ai/glossary/pair-programming/

|

||||

- https://www.geeksforgeeks.org/artificial-intelligence/what-is-ai-pair-programming/

|

||||

- https://graphite.com/guides/ai-pair-programming-best-practices

|

||||

|

||||

### Mobbing with AI / Mob Programming with AI

|

||||

|

||||

**Authority Rank: 2** | **Perception: Positive - Team-Focused**

|

||||

|

||||

**Sources:**

|

||||

1. **Atlassian Engineering Blog** — December 2025

|

||||

2. **Aaron Griffith** — January 2025 (YouTube)

|

||||

|

||||

**Description:**

|

||||

Entire team works together, AI as driver. AI generates code/tests in front of team. Team navigates, reviews, refines in real-time.

|

||||

|

||||

Best for: complex problems, knowledge transfer, quality assurance.

|

||||

|

||||

**Links:**

|

||||

- https://www.atlassian.com/blog/atlassian-engineering/mobbing-with-ai

|

||||

- https://www.youtube.com/watch?v=BsFPbYX4WXQ

|

||||

|

||||

### Agentic Coding / Agentic Programming

|

||||

|

||||

**Authority Rank: 3** | **Perception: Positive - Advanced**

|

||||

|

||||

**Sources:**

|

||||

1. **arXiv Research Paper** — August 2025

|

||||

2. **AI Accelerator Institute** — February 2025

|

||||

3. **Apiiro Security Platform** — September 2025

|

||||

|

||||

**Description:**

|

||||

LLM-based agents autonomously plan, execute, improve development tasks. Beyond code completion: generates programs, diagnoses bugs, writes tests, refactors.

|

||||

|

||||

Key properties: **autonomy, interactive, iterative refinement, goal-oriented**.

|

||||

|

||||

Agent behaviors: planning, memory management, tool integration, execution monitoring.
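
The four behaviors can be sketched in a toy loop. This is an illustrative skeleton under strong simplifications: the plan is passed in as a fixed list (a real agent would produce it with an LLM), and `run_agent`, `Tool`, and the log format are hypothetical names, not part of any cited framework.

```python
from typing import Callable

Tool = Callable[[str], str]


def run_agent(plan: list[tuple[str, str]], tools: dict[str, Tool], max_steps: int = 10) -> list[str]:
    """Execute a plan of (tool_name, argument) steps, recording each result in memory."""
    memory: list[str] = []  # memory management: a running log of what happened
    for step_no, (tool_name, argument) in enumerate(plan, start=1):
        if step_no > max_steps:
            # execution monitoring: enforce a step budget
            memory.append("stopped: step budget exhausted")
            break
        tool = tools.get(tool_name)  # tool integration: look up a registered tool
        if tool is None:
            memory.append(f"step {step_no}: unknown tool {tool_name!r}")
            break
        memory.append(f"step {step_no}: {tool_name} -> {tool(argument)}")
    return memory
```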

|

||||

|

||||

**Links:**

|

||||

- https://arxiv.org/html/2508.11126v1

|

||||

- https://www.aiacceleratorinstitute.com/agentic-code-generation-the-future-of-software-development/

|

||||

- https://apiiro.com/glossary/agentic-coding/

|

||||

|

||||

---

|

||||

|

||||

## Domain 5: Workflow & Process Integration

|

||||

|

||||

### Prompt-Driven Development (PDD)

|

||||

|

||||

**Authority Rank: 1** | **Perception: Neutral to Positive**

|

||||

|

||||

**Sources:**

|

||||

1. **Capgemini Software Engineering** — May 2025

|

||||

2. **Hexaware Technologies** — August 2025

|

||||

|

||||

**Description:**

|

||||

Developer breaks requirements into series of prompts. LLM generates code for each prompt. **Critical:** developer MUST review LLM-generated code.

|

||||

|

||||

Critical distinction from vibe coding: code review mandatory.

|

||||

|

||||

**Links:**

|

||||

- https://capgemini.github.io/ai/prompt-driven-development/

|

||||

- https://hexaware.com/blogs/prompt-driven-development-coding-in-conversation/

|

||||

|

||||

### AI-Augmented Development

|

||||

|

||||

**Authority Rank: 2** | **Perception: Positive - Practical**

|

||||

|

||||

**Sources:**

|

||||

1. **GitLab Official Documentation** — December 2023

|

||||

2. **Virtusa** — January 2024

|

||||

|

||||

**Description:**

|

||||

AI tools accelerate SDLC across all phases. Focus: code generation, bug detection, automated testing, smart documentation.

|

||||

|

||||

Key principle: humans handle strategy, AI handles execution.

|

||||

|

||||

**Links:**

|

||||

- https://about.gitlab.com/topics/agentic-ai/ai-augmented-software-development/

|

||||

- https://www.virtusa.com/digital-themes/ai-augmented-development

|

||||

|

||||

### Copilot-Driven Development

|

||||

|

||||

**Authority Rank: 3** | **Perception: Positive - Practical**

|

||||

|

||||

**Sources:**

|

||||

1. **GitHub/Microsoft Official** — January 2026

|

||||

2. **Emergn** — September 2025

|

||||

|

||||

**Description:**

|

||||

Specifically using GitHub Copilot or similar tools as development partner (not just assistant).

|

||||

|

||||

Context-aware, learns coding style. Enables conceptual focus instead of mechanical typing.

|

||||

|

||||

**Links:**

|

||||

- https://code.visualstudio.com/docs/copilot/overview

|

||||

- https://www.emergn.com/insights/how-ai-tools-impact-the-way-we-develop-software-our-github-copilot-journey/

|

||||

|

||||

### Conversational Coding

|

||||

|

||||

**Authority Rank: 4** | **Perception: Neutral to Positive**

|

||||

|

||||

**Sources:**

|

||||

1. **Google Cloud Platform** — January 2026

|

||||

2. **arXiv Research** — March 2025

|

||||

|

||||

**Description:**

|

||||

Natural language interaction with AI for development. Iterative, dialogue-based approach. Context retention across sessions.

|

||||

|

||||

**Links:**

|

||||

- https://cloud.google.com/conversational-ai

|

||||

- https://arxiv.org/abs/2503.16508

|

||||

|

||||

---

|

||||

|

||||

## Domain 6: Code Review & Maintenance

|

||||

|

||||

### AI Code Review

|

||||

|

||||

**Authority Rank: 1** | **Perception: Neutral to Positive**

|

||||

|

||||

**Sources:**

|

||||

1. **LinearB** — March 2024

|

||||

2. **Swimm.io** — November 2025

|

||||

3. **CodeAnt.ai** — May 2025

|

||||

|

||||

**Description:**

|

||||

Automated code examination using ML/LLM. Static and dynamic analysis. Identifies bugs, security issues, performance problems, code smells.

|

||||

|

||||

Tools: Qodo, CodeRabbit, SonarQube AI features.

|

||||

|

||||

**Links:**

|

||||

- https://linearb.io/blog/ai-code-review

|

||||

- https://swimm.io/learn/ai-tools-for-developers/ai-code-review-how-it-works-and-3-tools-you-should-know

|

||||

- https://www.codeant.ai/blogs/ai-vs-traditional-code-review

|

||||

|

||||

---

|

||||

|

||||

## Domain 7: Specialized & Emerging Approaches

|

||||

|

||||

### Ensemble Programming/Prompting with AI

|

||||

|

||||

**Authority Rank: 1** | **Perception: Positive - Advanced**

|

||||

|

||||

**Sources:**

|

||||

1. **Kinde.com** — November 2024

|

||||

2. **Ultralytics ML Research** — December 2025

|

||||

3. **arXiv** — June 2025

|

||||

|

||||

**Description:**

|

||||

Multiple AI models/prompts combined for better results. Aggregation methods: voting, averaging, weighted scoring.
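
The three aggregation methods can be sketched in a few lines. This is a minimal sketch assuming each model or prompt returns a plain string answer or a numeric score; the function names are illustrative, not from the cited sources.

```python
from collections import Counter, defaultdict


def majority_vote(answers: list[str]) -> str:
    """Voting: return the answer produced most often."""
    return Counter(answers).most_common(1)[0][0]


def average_score(scores: list[float]) -> float:
    """Averaging: combine numeric scores from several models into one value."""
    return sum(scores) / len(scores)


def weighted_vote(answers: list[tuple[str, float]]) -> str:
    """Weighted scoring: sum the weight behind each answer and return the heaviest one."""
    totals: dict[str, float] = defaultdict(float)
    for answer, weight in answers:
        totals[answer] += weight
    return max(totals, key=totals.get)
```

Weighted scoring lets a single trusted model outvote several weaker ones, which plain voting cannot do.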

|

||||

|

||||

**Links:**

|

||||

- https://kinde.com/learn/ai-for-software-engineering/prompting/ensemble-prompting-that-actually-moves-the-needle/

|

||||

- https://www.ultralytics.com/blog/exploring-ensemble-learning-and-its-role-in-ai-and-ml

|

||||

|

||||

### Prompt Engineering for Development

|

||||

|

||||

**Authority Rank: 2** | **Perception: Neutral to Positive**

|

||||

|

||||

**Sources:**

|

||||

1. **Google Cloud** — January 2026

|

||||

2. **OpenAI** — April 2025

|

||||

3. **GitHub** — May 2024

|

||||

|

||||

**Description:**

|

||||

Crafting effective prompts for AI models. Critical skill for AI-assisted development.

|

||||

|

||||

Techniques: few-shot learning, chain-of-thought, role prompting.
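
As a sketch of how the three techniques combine in one prompt: a role line, a handful of worked examples, and a step-by-step cue. The `Q:`/`A:` layout and the function name are assumptions for illustration, not a format prescribed by the linked guides.

```python
def build_few_shot_prompt(role: str, examples: list[tuple[str, str]], question: str) -> str:
    """Assemble a prompt using role prompting, few-shot examples, and a chain-of-thought cue."""
    lines = [f"You are {role}."]  # role prompting
    for example_question, example_answer in examples:  # few-shot examples
        lines.append(f"Q: {example_question}")
        lines.append(f"A: {example_answer}")
    lines.append(f"Q: {question}")
    lines.append("A: Let's think step by step.")  # chain-of-thought cue
    return "\n".join(lines)
```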

|

||||

|

||||

**Links:**

|

||||

- https://cloud.google.com/discover/what-is-prompt-engineering

|

||||

- https://platform.openai.com/docs/guides/prompt-engineering

|

||||

- https://github.blog/ai-and-ml/generative-ai/prompt-engineering-guide-generative-ai-llms/

|

||||

|

||||

### Intentional AI Development

|

||||

|

||||

**Authority Rank: 3** | **Perception: Positive - Thoughtful**

|

||||

|

||||

**Sources:**

|

||||

1. **Tech.eu** — January 2026

|

||||

2. **ghuntley.com** — August 2025

|

||||

|

||||

**Description:**

|

||||

Purpose-driven AI design. Clear roles and boundaries for AI. Deliberate practice and learning approach.

|

||||

|

||||

**Links:**

|

||||

- https://tech.eu/2026/01/05/adopting-an-intentional-ai-strategy-in-2026/

|

||||

- https://ghuntley.com/play/

|

||||

|

||||

---

|

||||

|

||||

## Domain 8: General & Cross-Cutting Terms

|

||||

|

||||

### AI-Assisted Coding / AI-Assisted Development

|

||||

|

||||

**Authority Rank: 1** | **Perception: Neutral to Positive**

|

||||

|

||||

**Sources:**

|

||||

1. **Wikipedia** — July 2025

|

||||

2. **GitLab** — 2025

|

||||

|

||||

**Description:**

|

||||

Broad umbrella term for AI enhancing software development tasks. Includes code completion, documentation generation, testing, debugging assistance.

|

||||

|

||||

Developer remains in control, reviews all suggestions. Most common adoption pattern globally.

|

||||

|

||||

**Links:**

|

||||

- https://en.wikipedia.org/wiki/AI-assisted_software_development

|

||||

- https://about.gitlab.com/topics/devops/ai-code-generation-guide/

|

||||

|

||||

---

|

||||

|

||||

## Key Takeaways

|

||||

|

||||

**Domain 1** (Experimental): Vibe Coding only. It is the only term with an explicitly negative connotation, and it is backed by high-authority sources (OpenAI co-founder Andrej Karpathy, Collins Dictionary).

|

||||

|

||||

**Domain 2** (Enterprise): Most authoritative domain with AWS, GitHub Engineering, ThoughtWorks as sources. Focus on production-grade, governance, architecture.

|

||||

|

||||

**Domain 3** (Quality): Research-heavy domain (Galileo AI, Google Cloud, Atlassian) with emphasis on responsible development.

|

||||

|

||||

**Domain 4** (Collaborative): Practical patterns, backed by major platforms (Microsoft/GitHub, Atlassian) and research (arXiv).

|

||||

|

||||

**Domains 5-7**: Workflow integration, code review, and specialized techniques. Narrower in scope, but important practices.

|

||||

|

||||

**Domain 8**: General term serving as baseline for all other approaches.

|

||||

|

|

@ -1,79 +0,0 @@

|

|||

# Research Index

|

||||

|

||||

Working file for methodology clustering and validation.

|

||||

|

||||

## Source

|

||||

- `perplexity-terminology-research.md` — original Perplexity research (Jan 2025)

|

||||

|

||||

---

|

||||

|

||||

## Methodology Clusters

|

||||

|

||||

### Tier 1: Must Include (High Authority + High Relevance)

|

||||

|

||||

| Term | Source Authority | Link Status | Include? |

|

||||

|------|------------------|-------------|----------|

|

||||

| Vibe Coding | Wikipedia, Collins Dictionary, Andrej Karpathy | ⏳ verify | |

|

||||

| Spec-Driven Development | GitHub, ThoughtWorks, Martin Fowler | ⏳ verify | |

|

||||

| AI-Driven Development Life Cycle | AWS | ⏳ verify | |

|

||||

| Agentic Coding | arXiv, AI Accelerator Institute | ⏳ verify | |

|

||||

| AI Pair Programming | GitHub/Microsoft, GeeksforGeeks | ⏳ verify | |

|

||||

|

||||

### Tier 2: Consider (Good Authority)

|

||||

|

||||

| Term | Source Authority | Link Status | Include? |

|

||||

|------|------------------|-------------|----------|

|

||||

| Architecture-First AI Development | WaveMaker, ITBrief | ⏳ verify | |

|

||||

| Test-Driven Development with AI | Galileo AI, Builder.io | ⏳ verify | |

|

||||

| Human-in-the-Loop (HITL) | Google Cloud, Atlassian | ⏳ verify | |

|

||||

| Prompt-Driven Development | Capgemini, Hexaware | ⏳ verify | |

|

||||

| Quality-First AI Coding | Qodo.ai | ⏳ verify | |

|

||||

|

||||

### Tier 3: Maybe (Lower Authority / Niche)

|

||||

|

||||

| Term | Source Authority | Link Status | Include? |

|

||||

|------|------------------|-------------|----------|

|

||||

| Mobbing with AI | Atlassian | ⏳ verify | |

|

||||

| Copilot-Driven Development | Microsoft/GitHub | ⏳ verify | |

|

||||

| Conversational Coding | Google Cloud, arXiv | ⏳ verify | |

|

||||

| Deterministic AI Development | Augment Code | ⏳ verify | |

|

||||

| Ensemble Programming | Kinde.com, Ultralytics | ⏳ verify | |

|

||||

|

||||

### Tier 4: Skip (Generic / Low Value)

|

||||

|

||||

- AI-Assisted Coding — too generic, umbrella term

|

||||

- AI-Augmented Development — too generic

|

||||

- Prompt Engineering — separate skill, not methodology

|

||||

- AI Code Review — tool category, not methodology

|

||||

|

||||

---

|

||||

|

||||

## Interview Questions Bank

|

||||

|

||||

*Questions to ask Oleg about each methodology*

|

||||

|

||||

1. Vibe Coding: "Have you ever worked this way? What was the result?"
2. Spec-Driven: "Have you tried writing a spec before coding with AI?"
3. Agentic: "How do you use Claude Code in agentic mode?"
4. TDD-AI: "Tests first with AI: does it work?"
5. HITL: "How often does the AI do something without your OK?"

|

||||

|

||||

---

|

||||

|

||||

## Henry's Opinions (from interview)

|

||||

|

||||

*Will be filled during interview*

|

||||

|

||||

---

|

||||

|

||||

## Link Verification Log

|

||||

|

||||

*Track which links checked and status*

|

||||

|

||||

| Link | Status | Notes |

|

||||

|------|--------|-------|

|

||||

| | | |

|

||||

|

||||

---

|

||||

|

||||

*Updated: 2025-01-22*

|

||||

|

|

@ -1,16 +0,0 @@

|

|||

# SEO Metadata

|

||||

|

||||

*pending — will be created after keyword research (step 5)*

|

||||

|

||||

## Title

|

||||

[TBD]

|

||||

|

||||

## Meta Description

|

||||

[TBD]

|

||||

|

||||

## Target Keywords

|

||||

- Primary: [TBD]

|

||||

- Secondary: [TBD]

|

||||

|

||||

## URL Slug

|

||||

ai-coding-methodologies-beyond-vibe-coding

|

||||

|

|

@ -1,3 +0,0 @@

|

|||

# Article Text

|

||||

|

||||

*pending — will be written after outline approval*

|

||||

|

|

@ -1,118 +0,0 @@

|

|||

# Agentic Coding

|

||||

|

||||

## Definition

**Agentic Coding** is a software development paradigm in which AI agents operate with a high degree of autonomy: they plan, execute, validate, and iteratively improve code on their own, with minimal human intervention.

|

||||

|

||||

---

|

||||

|

||||

## Academic Validation

### arXiv 2508.11126 (August 2025)
**["AI Agentic Programming: A Survey of Techniques"](https://arxiv.org/abs/2508.11126)**
- **Authors**: UC San Diego, Carnegie Mellon University
- **Scope**: comprehensive survey of agentic systems for software development
- **Key concepts**: agent taxonomy, planning, context management, multi-agent systems

|

||||

|

||||

### arXiv 2512.14012 (December 2025)
**["Professional Software Developers Don't Vibe, They Control"](https://arxiv.org/abs/2512.14012)**
- **Authors**: University of Michigan, UC San Diego
- **Methodology**: 13 observations plus 99 developer survey responses (3-25 years of experience)
- **Findings**: professionals use agents in a controlled manner, relying on plan files, context files, and tight feedback loops

|

||||

|

||||

---

|

||||

|

||||

## Ralph Loop

|

||||

|

||||

### History
- **Inventor**: Geoffrey Huntley
- **First discovered**: February 2024
- **Public launch**: May 2025
- **Viral wave**: January 2026

|

||||

|

||||

### Publications
- **[VentureBeat (January 6, 2026)](https://venturebeat.com/technology/how-ralph-wiggum-went-from-the-simpsons-to-the-biggest-name-in-ai-right-now)**: "How Ralph Wiggum went from 'The Simpsons' to the biggest name in AI"
- **[Dev Interrupted Podcast (January 12, 2026)](https://devinterrupted.substack.com/p/inventing-the-ralph-wiggum-loop-creator)**: interview with Geoffrey Huntley
- **[Ralph Wiggum Loop Official Site](https://ralph-wiggum.ai)**
- **[LinearB Blog](https://linearb.io/blog/ralph-loop-agentic-engineering-geoffrey-huntley)**: Mastering Ralph loops

|

||||

|

||||

### Core Idea
A Bash loop that starts with a fresh context on every iteration: `while :; do cat PROMPT.md | agent; done`

|

||||

|

||||

### Economics
- **Cost**: $10.42/hour (Claude Sonnet 4.5, per Huntley's data)
- **Case studies**: cloning HashiCorp Nomad and Tailscale in days instead of years

|

||||

|

||||

---

|

||||

|

||||

## Professional Tools

### Claude Code
- **Status**: full support for agentic workflows
- **Ralph Loop**: [official integration](https://ralph-wiggum.ai)
- **Community workflows**: [Reddit](https://www.reddit.com/r/ClaudeCode/comments/1m5k6ka/i_built_a_specdriven_development_workflow_for/)
- **Usage**: bash scripts, MCP, custom slash commands

|

||||

|

||||

### Cursor Composer
- **Launch**: October 2025 (Cursor 2.0)
- **Status**: production-ready multi-agent IDE
- **Capabilities**: up to 8 parallel agents, Git worktree isolation, native browser tool, voice mode
- **Link**: [Cursor 2.0 Launch](https://cursor.com/blog/2-0)
- **Scaling**: [Long-running autonomous coding](https://cursor.com/blog/scaling-agents) (January 2026)

|

||||

|

||||

### GitHub Copilot
- **Agent Mode** (preview, February 2025): [synchronous agentic collaborator](https://github.blog/ai-and-ml/github-copilot/agent-mode-101-all-about-github-copilots-powerful-mode/)
- **Coding Agent** (preview, July 2025): [asynchronous autonomous agent](https://github.blog/ai-and-ml/github-copilot/from-idea-to-pr-a-guide-to-github-copilots-agentic-workflows/)
- **Announcement**: [GitHub Newsroom](https://github.com/newsroom/press-releases/agent-mode) (February 5, 2025)
- **Availability**: VS Code, Visual Studio, JetBrains, Eclipse, Xcode

|

||||

|

||||

### Other Tools
- **[Agentic Coding Framework](https://github.com/DafnckStudio/Agentic-Coding-Framework)**: full-cycle automation, GitHub
- **Windsurf**: agentic IDE, commercial
- **Cline**: open-source assistant, VS Code extension

|

||||

|

||||

---

|

||||

|

||||

## Integration with Claude Code

✅ **Fully supported**

- Autonomous file reads and writes, terminal command execution
- [Ralph Loop implementation](https://ralph-wiggum.ai)
- [Custom slash commands](https://www.reddit.com/r/ClaudeCode/comments/1m5k6ka/i_built_a_specdriven_development_workflow_for/)
- [Practical examples](https://blog.devgenius.io/ralph-wiggum-with-claude-code-how-people-are-using-it-effectively-1d03d5027285)

|

||||

|

||||

---

|

||||

|

||||

## Minimal Approach Without Frameworks

**Methodology**: create `SPECIFICATION.md` and `IMPLEMENTATION_PLAN.md`, then run a bash loop

**Principle**: fresh context on every iteration, progress kept in Git history, the agent completes ONE task and exits

**Advantages**: zero dependencies, full control, no context rot, Git-based persistence
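
The loop can be sketched in Python as well. This is a hedged variant, not Huntley's implementation: unlike the endless `while :` one-liner, it caps the number of iterations and stops on a non-zero exit code. `run_loop` and `agent_cmd` are hypothetical names; substitute whatever command starts your agent and reads the prompt from stdin.

```python
import subprocess
import sys
from pathlib import Path


def run_loop(agent_cmd: list[str], prompt_path: Path, max_iterations: int = 3) -> list[int]:
    """Start a fresh agent process per iteration, feeding it the same prompt file on stdin."""
    exit_codes = []
    for _ in range(max_iterations):
        # Re-read the file each time so edits made between iterations are picked up.
        prompt = prompt_path.read_text(encoding="utf-8")
        # A new process per iteration means no context carries over from the previous run.
        result = subprocess.run(agent_cmd, input=prompt, text=True)
        exit_codes.append(result.returncode)
        if result.returncode != 0:
            break  # stop on failure instead of looping forever
    return exit_codes
```

Progress between iterations lives outside the process (for example, in Git commits the agent makes), which is what keeps each context fresh.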

|

||||

|

||||

---

|

||||

|

||||

## Links

### Academic
- [arXiv 2508.11126](https://arxiv.org/abs/2508.11126): AI Agentic Programming Survey (August 2025)
- [arXiv 2512.14012](https://arxiv.org/abs/2512.14012): Professional Developers Don't Vibe (December 2025)

|

||||

|

||||

### Ralph Loop

|

||||

- [VentureBeat](https://venturebeat.com/technology/how-ralph-wiggum-went-from-the-simpsons-to-the-biggest-name-in-ai-right-now) (January 2026)

|

||||

- [Ralph Wiggum Official](https://ralph-wiggum.ai)

|

||||

- [LinearB Blog](https://linearb.io/blog/ralph-loop-agentic-engineering-geoffrey-huntley)

|

||||

- [Dev Interrupted Podcast](https://devinterrupted.substack.com/p/inventing-the-ralph-wiggum-loop-creator)

|

||||

|

||||

### Tools
- [Cursor 2.0](https://cursor.com/blog/2-0) (October 2025)

|

||||

- [GitHub Copilot Agent Mode](https://github.blog/ai-and-ml/github-copilot/agent-mode-101-all-about-github-copilots-powerful-mode/)

|

||||

- [Claude Code Integration](https://blog.devgenius.io/ralph-wiggum-with-claude-code-how-people-are-using-it-effectively-1d03d5027285)

|

||||

- [Agentic Framework GitHub](https://github.com/DafnckStudio/Agentic-Coding-Framework)

|

||||

|

||||

### Additional

|

||||

- [Emergent Mind Overview](https://www.emergentmind.com/topics/agentic-coding)

|

||||

- [Martin Fowler](https://martinfowler.com/articles/exploring-gen-ai/sdd-3-tools.html)

|

||||

- [Cursor Scaling Agents](https://cursor.com/blog/scaling-agents) (January 2026)

|

||||

|

|

@ -1,401 +0,0 @@

|

|||

# Developer AI Tool Usage Statistics (2024-2026)

## Overall AI Usage Statistics

### Usage Trends by Year

|

||||

|

||||

\begin{table}

|

||||

\begin{tabular}{|l|c|c|c|}

|

||||

\hline

|

||||

Метрика & 2024 & 2025 & Изменение \\

|

||||

\hline

|

||||

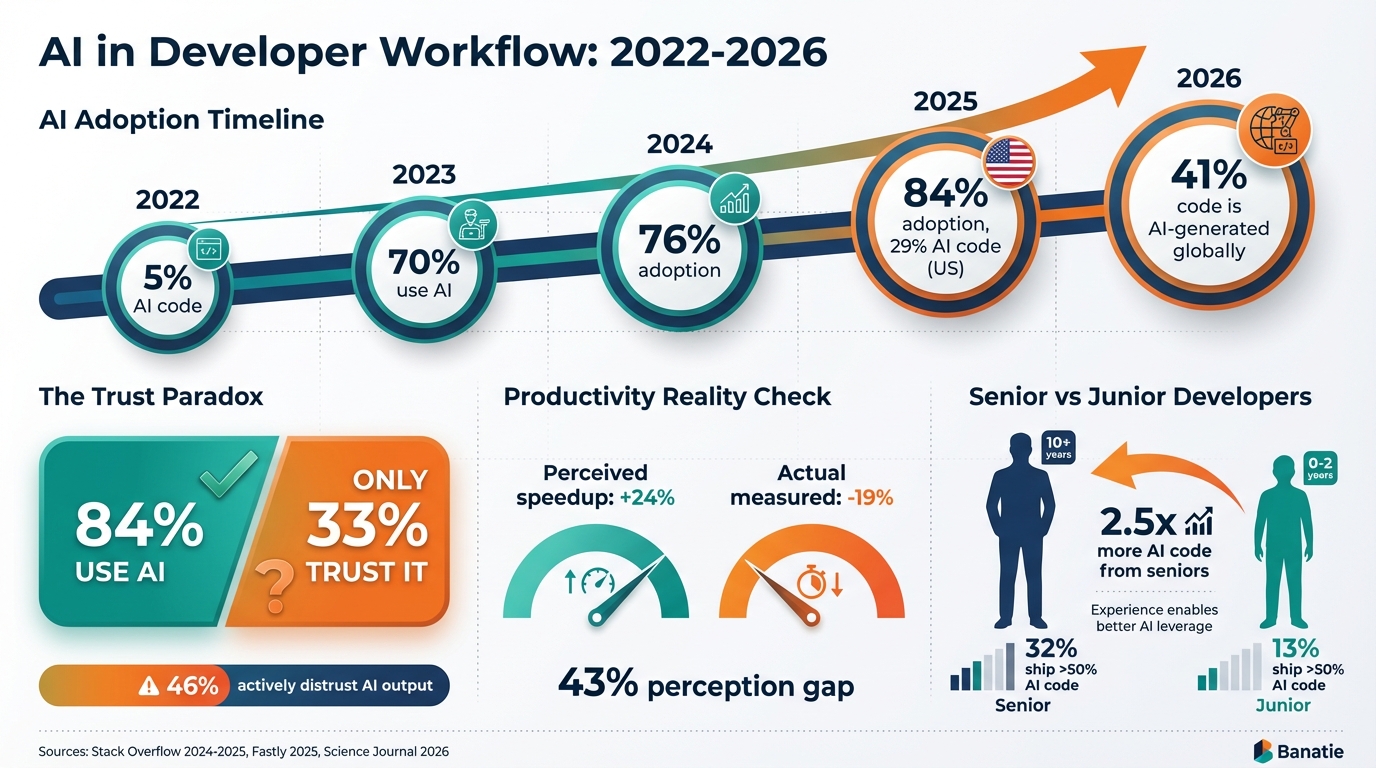

Используют или планируют использовать AI & 76\% & 84\% & +8 п.п. \\

|

||||

\hline

|

||||

Активно используют AI & 62\% & н/д & — \\

|

||||

\hline

|

||||

Используют ежедневно (профессионалы) & н/д & 51\% & — \\

|

||||

\hline

|

||||

Позитивное отношение к AI & 70\%+ & 60\% & -10 п.п. \\

|

||||

\hline

|

||||

Доверяют точности AI & н/д & 33\% & — \\

|

||||

\hline

|

||||

Активно не доверяют AI & н/д & 46\% & — \\

|

||||

\hline

|

||||

\end{tabular}

|

||||

\caption{Сравнение показателей использования AI по данным Stack Overflow 2024 и 2025}

|

||||

\end{table}

|

||||

|

||||

**Sources:**

|

||||

- Stack Overflow Developer Survey 2024[1]

|

||||

- Stack Overflow Developer Survey 2025[2]

|

||||

- Final Round AI Analysis of Stack Overflow 2025[3]
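
The "Change" column is expressed in percentage points (pp), which is not the same as a relative percent change. A short sketch of the arithmetic, using the 76% and 84% figures from the table (the function names are illustrative):

```python
def percentage_point_change(old_pct: float, new_pct: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return new_pct - old_pct


def relative_change(old_pct: float, new_pct: float) -> float:
    """Change relative to the starting value, in percent."""
    return (new_pct - old_pct) / old_pct * 100


if __name__ == "__main__":
    # 76% -> 84%: +8 percentage points, but roughly a 10.5% relative increase.
    print(percentage_point_change(76, 84))
    print(round(relative_change(76, 84), 1))
```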

|

||||

|

||||

### Key Usage Metrics (2025-2026)

|

||||

|

||||

\begin{itemize}
\item 84\% of developers use or plan to use AI tools (Stack Overflow 2025)[2]
\item 90\% of developers use AI (DORA Report 2025)[4]
\item 85\% regularly use AI tools (JetBrains State of Developer Ecosystem 2025)[5]
\item 51\% of professional developers use AI daily (Stack Overflow 2025)[2][6]
\item 82\% use ChatGPT[7]
\item 68\% use GitHub Copilot[7]
\item 47\% use Google Gemini[7]
\item 41\% use Claude Code[7]
\item 59\% of developers use three or more AI tools in parallel[7][8]
\end{itemize}

|

||||

|

||||

**Sources:**

|

||||

- Stack Overflow Developer Survey 2025[2]

|

||||

- DORA Report 2025 (Google Cloud)[4]

|

||||

- JetBrains State of Developer Ecosystem 2025[5]

|

||||

- AI Coding Assistant Statistics 2026[6][7]

|

||||

- Second Talent AI Coding Statistics[8]

|

||||

|

||||

## Trust in AI Tools

### The Usage-Trust Paradox

|

||||

|

||||

\begin{table}
\begin{tabular}{|l|c|}
\hline
Trust metric & Percentage \\
\hline
Positive sentiment toward AI (2023-2024) & 70\%+ \\
\hline
Positive sentiment toward AI (2025) & 60\% \\
\hline
Trust AI accuracy & 33\% \\
\hline
Actively distrust AI & 46\% \\
\hline
Highly trust AI output & 3\% \\
\hline
Highly trust AI output (experienced developers) & 2.6\% \\
\hline
\end{tabular}
\caption{Trust metrics for AI tools}
\end{table}

|

||||

|

||||

**Sources:**

|

||||

- Stack Overflow Survey 2025 Analysis[3][9]

|

||||

- Intelligent Tools Analysis[10]

|

||||

|

||||

### Main Trust Issues

|

||||

|

||||

\begin{itemize}

|

||||

\item 66\% of developers complain about "AI solutions that are almost right, but not quite"[2]
\item 45\% say that "debugging AI-generated code takes more time"[2]

|

||||