# AI Pair Programming vs Agentic Coding: Two Extremes of Vibe Coding

*Part 2 of the "Beyond Vibe Coding" series*

In [Part 1](/henry-devto/what-is-vibe-coding-in-2026), we covered vibe coding and spec-driven development — two ends of the planning spectrum. Now let's explore the autonomy spectrum: how much control do you give the AI?

On one end: AI pair programming, where you stay in the driver's seat. On the other: agentic coding, where you set a goal and walk away. Both have their place. Both have their traps.

---

## AI Pair Programming: Working Together

**Credentials:**

- GitHub official positioning: ["Your AI pair programmer"](https://github.com/features/copilot) (Copilot marketing since 2021)

- [Microsoft Learn documentation](https://learn.microsoft.com/en-us/industry/mobility/architecture/ai-pair-programmer): AI pair programmer architecture

- [GitHub Copilot Fundamentals](https://learn.microsoft.com/en-us/training/paths/copilot/) training on Microsoft Learn

- [Responsible AI practices](https://github.blog/ai-and-ml/github-copilot/responsible-ai-pair-programming-with-github-copilot/) (GitHub Blog)

- Tools: [GitHub Copilot](https://github.com/features/copilot) (Free, Pro $10/month, Business $19/user/month, Enterprise $39/user/month), [Cursor](https://cursor.com), [Windsurf](https://www.codeium.com/windsurf), [Tabnine](https://www.tabnine.com), [AWS CodeWhisperer](https://aws.amazon.com/codewhisperer) (now part of Amazon Q Developer), [Cody by Sourcegraph](https://sourcegraph.com/cody)

- Claude Code positioning: ["Your AI Pair Programming Assistant"](https://claudecode.org) with [output styles for pair programming](https://shipyard.build/blog/claude-code-output-styles-pair-programming/) (September 2025)

- 720 monthly searches for "ai pair programming"

**The promise:**

The AI acts as a collaborative partner, not just autocomplete: continuous suggestions while you code, context-aware completions, real-time feedback and alternatives. It goes beyond tab-completion by understanding your project's context.

**My honest experience:**

I've tried AI autocomplete multiple times. Each time, I ended up disabling it completely.

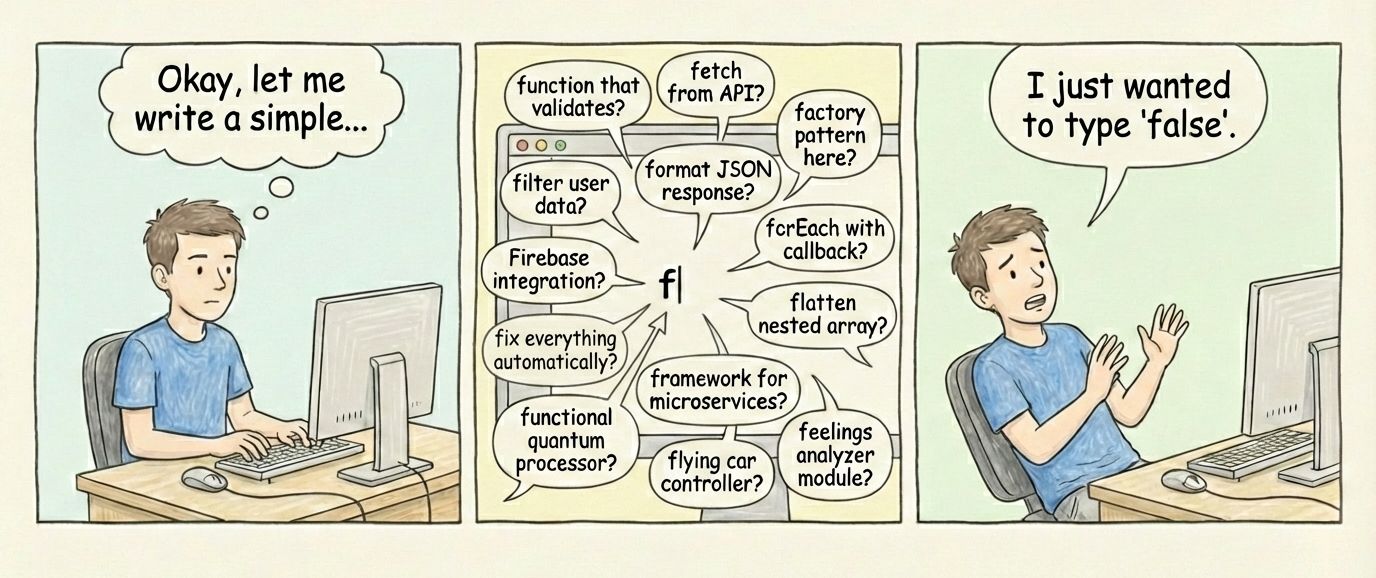

Why? When I'm writing code, I've already worked out mentally what I want. AI suggesting my next line just interrupts my thought process. Standard IDE completions have always worked fine for me.

I know many developers love it. It just doesn't fit my workflow.

**Where I find real pair programming:**

Claude Desktop with good system instructions plus Filesystem MCP to read actual project files. That's when I feel like I'm working WITH someone who understands my problem and actually helps solve it.

Autocomplete is reactive. Real pair programming is proactive — discussion, exploration, questioning assumptions.
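
For reference, a Claude Desktop setup like this is usually wired up through an `mcpServers` entry in its config file. The sketch below prints one such entry for the reference Filesystem MCP server (`@modelcontextprotocol/server-filesystem`, launched via `npx`). The function name `print_filesystem_mcp_config` and the example path are hypothetical, and the sketch assumes the directory path contains no quotes or backslashes, because it is substituted into the JSON as-is.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print a Claude Desktop "mcpServers" entry that exposes
# exactly one project directory through the reference Filesystem MCP server.
# Assumes the path contains no characters that would need JSON escaping.
print_filesystem_mcp_config() {
  local project_dir="$1"
  cat <<EOF
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "$project_dir"]
    }
  }
}
EOF
}
```

Run `print_filesystem_mcp_config "$HOME/code/my-project"`, merge the output into Claude Desktop's `claude_desktop_config.json` (its location depends on your OS), and restart the app. Exposing only the project directory keeps the server's reach limited to the files you actually want to discuss.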

**The productivity numbers:**

GitHub claims 56% faster task completion with AI assistants. Their study shows Copilot users complete 126% more projects per week. Sounds great.

But here's the counter-evidence: a 2025 METR randomized controlled trial found that experienced open-source developers took 19% LONGER to complete tasks when using AI tools. That directly contradicts the marketing.
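
To see how far apart these two claims are, put both on the same hypothetical 60-minute task. This assumes "56% faster" means the task took 56% less time. It also ignores that the studies measured different tasks and developers, so treat it as an illustration rather than a like-for-like comparison:

$$
\begin{aligned}
T_{\text{GitHub}} &= (1 - 0.56) \times 60\ \text{min} = 26.4\ \text{min} \\
T_{\text{METR}} &= (1 + 0.19) \times 60\ \text{min} = 71.4\ \text{min}
\end{aligned}
$$

Under those assumptions, the same task takes about 2.7 times as long in the METR scenario as in GitHub's.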

The truth probably depends on context. AI effectiveness varies wildly by task type, developer skill with AI tools, and workflow fit. Not universally faster, not universally slower.

---

## Agentic Coding: High Autonomy

**Credentials:**

- Academic research: [arXiv 2508.11126](https://arxiv.org/abs/2508.11126) "AI Agentic Programming: A Survey of Techniques" (UC San Diego, Carnegie Mellon, August 2025) — comprehensive taxonomy of agent systems

- [arXiv 2512.14012](https://arxiv.org/abs/2512.14012) "Professional Software Developers Don't Vibe, They Control" (University of Michigan, December 2025) — 13 observations + 99 developer surveys showing professionals use agents in controlled mode with plan files and tight feedback loops

- Tools: [Claude Code](https://claude.ai/code), [Cursor 2.0 Composer](https://cursor.com/blog/2-0) (October 2025, up to 8 parallel agents, Git worktrees isolation), [GitHub Copilot Agent Mode](https://github.blog/ai-and-ml/github-copilot/agent-mode-101-all-about-github-copilots-powerful-mode/) (preview February 2025), [Copilot Coding Agent](https://github.blog/ai-and-ml/github-copilot/from-idea-to-pr-a-guide-to-github-copilots-agentic-workflows/) (asynchronous, July 2025)

- [Cursor Scaling Agents](https://cursor.com/blog/scaling-agents) (January 2026): long-running autonomous coding

- Open-source: [Agentic Coding Framework](https://github.com/DafnckStudio/Agentic-Coding-Framework) on GitHub

**What it is:**

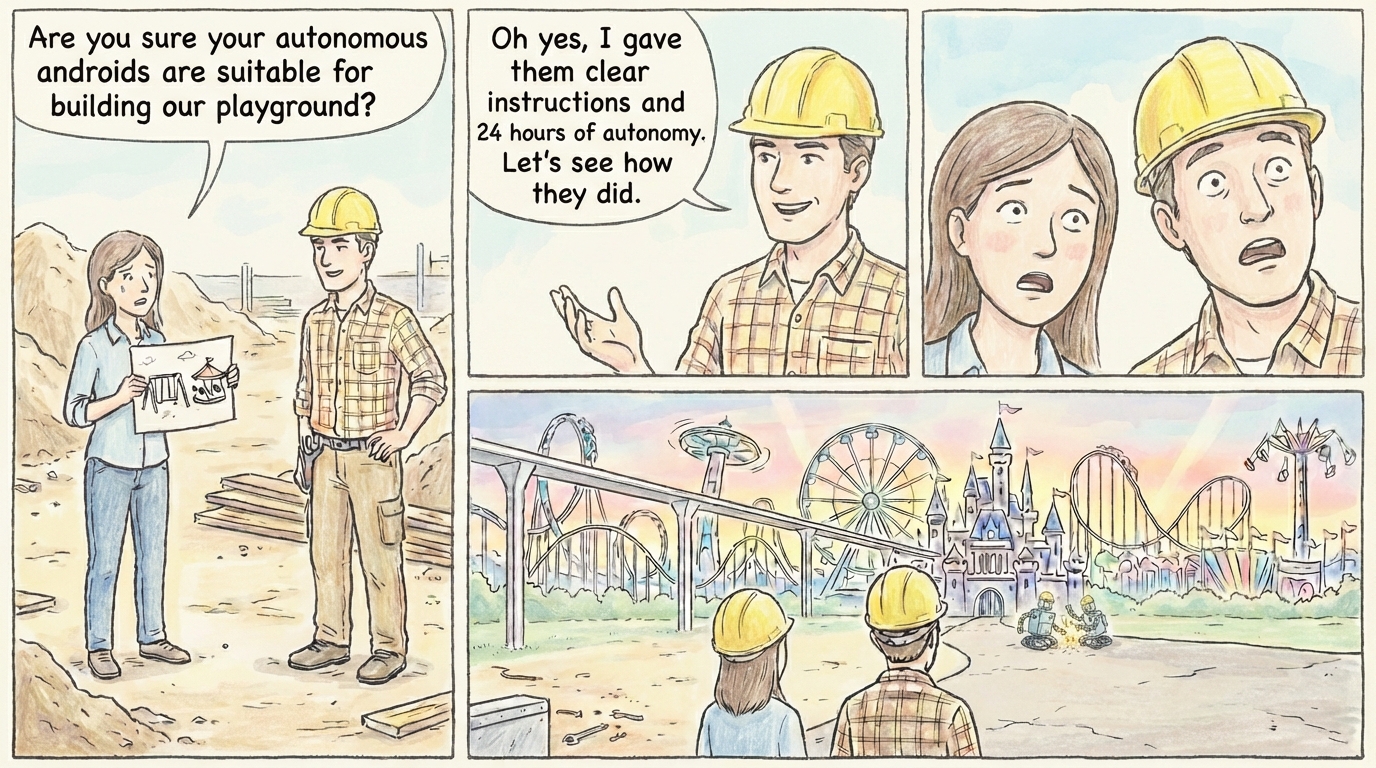

The agent operates with high autonomy. The human sets high-level goals, and the agent figures out the implementation. It can plan, execute, debug, and iterate without constant approval.

How this differs from vibe coding: agentic coding is systematic. The agent creates a plan, executes it methodically, and can course-correct. Vibe coding is reactive prompting without structure.

My take? Skeptical so far.

I'd like to believe in this approach. The idea of extended autonomous sessions sounds amazing. But here's my question: what tasks justify that much autonomous work?

Writing a detailed spec takes me longer than executing it. If Claude Code finishes in 10 minutes after I've spent hours on specification, why would I need 14 hours of autonomy?

I'm skeptical it would pay off in my projects. Maybe it works in certain domains: large refactors, extensive testing, documentation generation across huge codebases? But even then, I can't imagine Claude Code not handling it within an hour.

**The Ralph Loop extreme:**

Named after Ralph Wiggum from The Simpsons. The concept: give the agent a task, walk away, return to finished work.

- **Creator**: Geoffrey Huntley ([ghuntley.com/ralph](https://ghuntley.com/ralph/))

- **Timeline**: First discovery February 2024 → Public launch May 2025 → [Viral wave January 2026](https://venturebeat.com/technology/how-ralph-wiggum-went-from-the-simpsons-to-the-biggest-name-in-ai-right-now) (VentureBeat: "How Ralph Wiggum went from 'The Simpsons' to the biggest name in AI")

- **Interviews**: [Dev Interrupted Podcast](https://devinterrupted.substack.com/p/inventing-the-ralph-wiggum-loop-creator) (January 12, 2026), [LinearB Blog](https://linearb.io/blog/ralph-loop-agentic-engineering-geoffrey-huntley)

- **Official plugin**: [ralph-wiggum.ai](https://ralph-wiggum.ai) from Anthropic (Boris Cherny)

- **Economics**: $10.42/hour with Claude Sonnet 4.5 (per Huntley's data)

- **Case studies**: cloning HashiCorp Nomad and Tailscale in days instead of years
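
Combining that hourly figure with the 14-hour sessions Huntley has reported gives a rough per-session cost, assuming the rate holds for the whole run:

$$
\$10.42/\text{h} \times 14\ \text{h} = \$145.88 \text{ per session}
$$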

The loop is elegantly simple: `while :; do cat PROMPT.md | agent; done`. Each iteration starts with a fresh context, and progress is tracked in Git. Huntley reported 14-hour autonomous sessions.
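
A slightly more defensive version of that one-liner might look like the sketch below. It keeps the core idea (re-run the same prompt in a fresh agent process, commit progress to Git after each pass) but adds an iteration cap so a runaway loop eventually stops. `AGENT_CMD` is a placeholder for whatever agent CLI you use that reads its prompt on stdin. `MAX_ITERATIONS`, `ralph_loop`, and the commit messages are hypothetical additions, not part of Huntley's setup.

```bash
#!/usr/bin/env bash
# Sketch of a Ralph-style loop with an added iteration cap.
# AGENT_CMD is a placeholder for any agent CLI that reads its prompt on stdin.
set -euo pipefail

PROMPT_FILE="${PROMPT_FILE:-PROMPT.md}"
AGENT_CMD="${AGENT_CMD:-agent}"          # hypothetical default; replace with your agent CLI
MAX_ITERATIONS="${MAX_ITERATIONS:-50}"   # added safety cap; the original loop never stops

ralph_loop() {
  local i
  for ((i = 1; i <= MAX_ITERATIONS; i++)); do
    echo "--- iteration $i ---"
    # A new agent process per pass means its context starts fresh each time.
    # $AGENT_CMD is left unquoted on purpose so it can include flags.
    cat "$PROMPT_FILE" | $AGENT_CMD || echo "agent exited non-zero; continuing"
    # Progress lives in Git rather than in the agent's memory.
    git add -A
    git commit -q -m "ralph: iteration $i" || true   # nothing to commit is not an error
  done
}
```

To use it, add a final `ralph_loop` line and run the script from the root of a Git repository that contains `PROMPT.md`. You can override `AGENT_CMD`, `PROMPT_FILE`, or `MAX_ITERATIONS` through environment variables.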

If you've found great applications for Ralph Loop, I'm genuinely curious. Share your wins in the comments.

**The permissions reality:**

Agentic coding hits a wall in practice: permissions. Claude Code asks for approval on every file write, API call, and terminal command. That completely breaks the flow and kills the autonomy promise.

My workarounds: I proactively ask Claude to add all MCP tools to the allow list in `.claude/settings.json`, which reduces interruptions. Sometimes I run with `--dangerously-skip-permissions`, but I keep an eye on what's happening.
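
Here is a minimal sketch of seeding that file from the shell. It assumes Claude Code's `permissions` block with `allow` and `deny` rule lists. The `mcp__filesystem` rule assumes an MCP server registered under the name `filesystem`, so substitute your own server names. The `Bash(...)` and `Read(...)` rules are illustrative examples, not recommendations. The script refuses to overwrite an existing file, so merge by hand if you already have one.

```bash
#!/usr/bin/env bash
# Sketch: seed a project-level Claude Code permission list.
# "mcp__filesystem" assumes an MCP server named "filesystem"; rules are examples.
settings=".claude/settings.json"
mkdir -p .claude
if [ -e "$settings" ]; then
  echo "$settings already exists; merge the rules by hand instead." >&2
else
  cat > "$settings" <<'EOF'
{
  "permissions": {
    "allow": [
      "mcp__filesystem",
      "Bash(npm run test:*)"
    ],
    "deny": [
      "Bash(git push:*)",
      "Read(./.env)"
    ]
  }
}
EOF
fi
```

Denying `git push` helps keep an agent's mistakes on your machine, where Git can still undo them.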

Try to set up your environment so the agent can't do anything that `git reset` couldn't fix. This is clearly a problem waiting for a solution: we need better ways to control what coding agents do.
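
One way to follow that advice is to take a Git checkpoint before each agent run and roll back to it if the run goes wrong. The helper names below are hypothetical. Note what this does not cover: anything the agent does outside the working tree (pushed commits, network calls, database writes, files elsewhere on disk) is beyond `git reset`. That is exactly why the environment setup matters.

```bash
#!/usr/bin/env bash
# Hypothetical helpers; run from inside a Git repository.

# Commit the current state (even if unchanged) and print the checkpoint hash.
agent_checkpoint() {
  git add -A
  git commit -q --allow-empty -m "checkpoint before agent run"
  git rev-parse HEAD
}

# Restore the working tree to a checkpoint created by agent_checkpoint.
agent_rollback() {
  local checkpoint="$1"
  git reset -q --hard "$checkpoint"   # drop the agent's edits and any commits it made
  git clean -fdq                      # delete untracked files it created (ignored files are kept)
}
```

Typical use: `checkpoint="$(agent_checkpoint)"`, run the agent, review with `git diff "$checkpoint"`, and call `agent_rollback "$checkpoint"` if you don't like the result.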

---

## Vibe Coding vs Agentic Coding: The Difference

People often confuse these. Here's how I see it:

**Vibe coding**: reactive. Prompt → result → prompt → result. No plan, no structure, just vibes.

**Agentic coding**: systematic. Goal → plan → execute → validate → iterate. Structure exists, AI manages it.

Both can produce working code. The difference is predictability. Agentic coding with a good spec gives you reproducible results. Vibe coding gives you... vibes.
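
The validate step is what separates the two in practice. Here is a minimal sketch of the agentic cycle, using a test command as the validation gate. `AGENT_CMD` and `TEST_CMD` are placeholders for your own agent CLI and test runner, and `agentic_run` is a hypothetical name. Real agent tools implement this loop internally in their own ways.

```bash
#!/usr/bin/env bash
# Placeholders: point these at your real agent CLI and test runner.
AGENT_CMD="${AGENT_CMD:-agent}"      # hypothetical agent that reads a prompt on stdin
TEST_CMD="${TEST_CMD:-npm test}"     # any command that exits 0 on success

# Goal -> plan/execute -> validate -> iterate, stopping once validation passes.
agentic_run() {
  local goal="$1" max_attempts="${2:-5}" attempt feedback=""
  for ((attempt = 1; attempt <= max_attempts; attempt++)); do
    # Plan + execute: give the agent the goal and the last validation output.
    printf 'Goal: %s\n\nLast test output:\n%s\n' "$goal" "$feedback" | $AGENT_CMD
    # Validate: a repeatable check, not a vibe, decides whether we're done.
    if feedback="$($TEST_CMD 2>&1)"; then
      echo "validated after $attempt attempt(s)"
      return 0
    fi
    # Iterate: the failing output becomes input for the next attempt.
  done
  echo "gave up after $max_attempts attempts" >&2
  return 1
}
```

A vibe-coding session has no equivalent of that `if` line. You decide by eye when the result looks right.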

---

## What's Next

In Part 3, we'll cover the guardrails: Human-in-the-Loop patterns (strategic checkpoints, not endless permissions) and TDD + AI (tests as specification, quality first).

When stakes are high, vibes aren't enough. You need structure that catches mistakes before they ship.

---

*Do you use agentic coding? Have you tried Ralph Loop? I'm skeptical but curious — what applications actually work? Share in the comments.*
